A/B testing plays a critical role in improving user engagement and conversion rates on a website. It is a way to compare two variants of a webpage to find out which one performs better. This article walks you through the steps for running an A/B test successfully, so that you can base your decisions on data and improve your website’s performance.

Step 1: Create Your Hypothesis

- Find the Change: Decide what you want to modify on your page and why. For example, users might not be clicking a button on the main page.

- Goals of the Change: State how you expect a tweak, such as a different color or size, to affect user behavior.

Step 2: Choose the Test URL

- Decide which page is appropriate: the page where you intend to run the test should receive enough traffic over a two-week period or another suitable test duration.
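
How much traffic counts as "enough" depends on your current conversion rate and the size of the change you hope to detect. The sketch below is only a rough planning aid, not part of Plerdy: it uses the standard normal-approximation formula for comparing two proportions, and the function names, the 5% significance level, and the 80% power are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sessions_per_variant(baseline_rate: float, expected_rate: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate sessions needed in EACH variant to detect the difference
    between two conversion rates (two-sided test, normal approximation)."""
    if baseline_rate == expected_rate:
        raise ValueError("the two rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_power = NormalDist().inv_cdf(power)          # desired statistical power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


def daily_sessions_needed(per_variant: int, days: int = 14) -> int:
    """Daily page sessions required to fill both variants within `days`."""
    return math.ceil(2 * per_variant / days)


# Hypothetical numbers: a 5% baseline that you hope to lift to 6%.
needed = sessions_per_variant(0.05, 0.06)
print(needed, daily_sessions_needed(needed))
```

With these hypothetical rates the estimate is on the order of eight thousand sessions per variant. If the page cannot reach the resulting daily figure, a longer test or a bolder change may be more realistic.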

Step 3: Define Your Goal

- Target Page: Choose a page, say a “Thank You” page, that the modification will influence.

- Site Activity: Alternatively, the goal can be a specific site event, such as a button click.

Setting Up A/B Testing in Plerdy

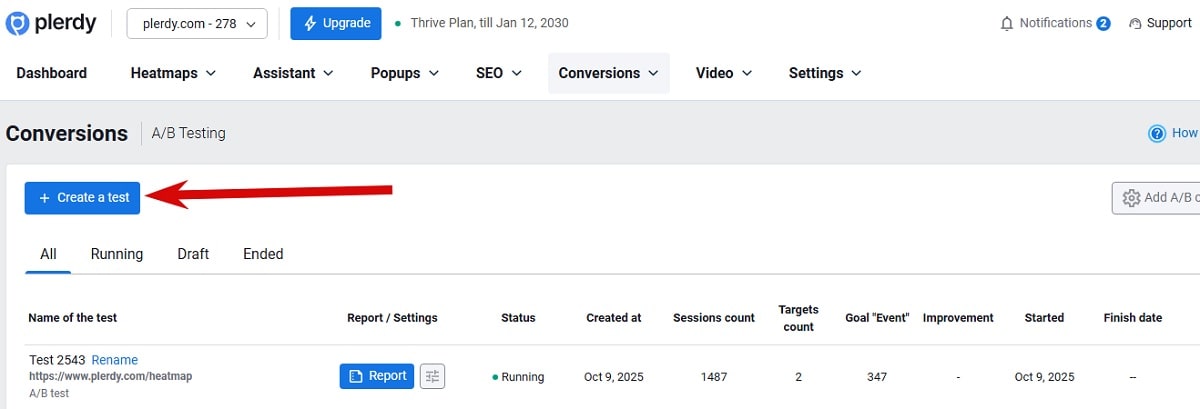

- Access A/B Testing: In your Plerdy account, go to Conversions > A/B Testing and click the blue “Create a test” button.

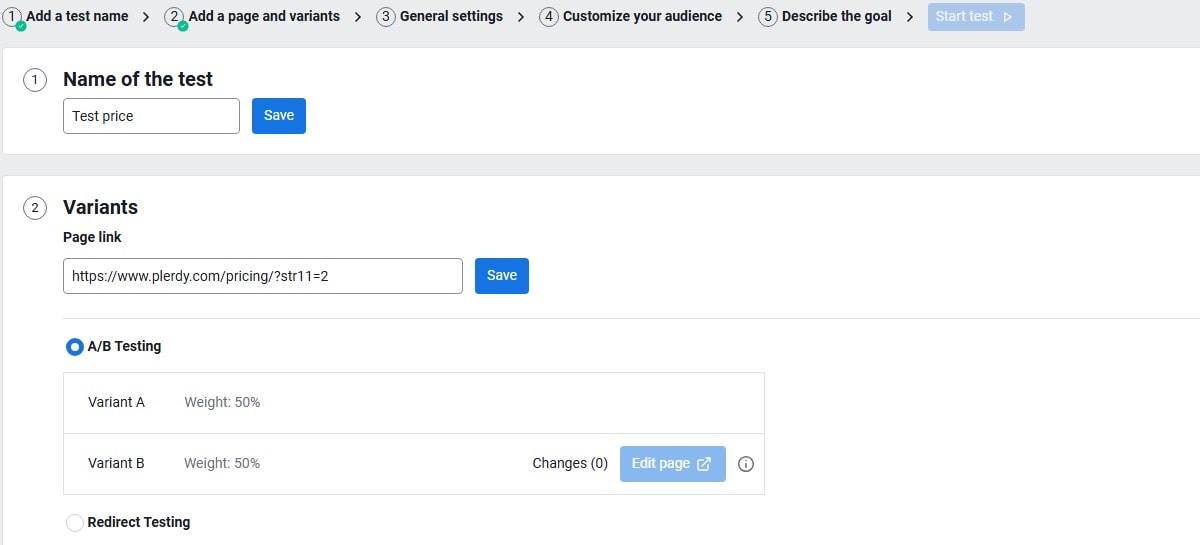

- Test Name: Enter a name for your test.

- Test URL: Add the URL where the test will run.

- Tracking Code: Go to “Settings,” copy the main and additional tracking codes, and add them to the necessary pages, including the goal page, if the “A/B testing script” has not been added there yet. Then clear the site cache and confirm that the tracking code is installed.

Test Variants and Settings

- End Date: Set when you plan to end the test. Alternatively, manually stop the test after 2-3 weeks if enough data is collected.

- Audience: Add rules if necessary, including countries and devices.

- Goals: Add the exact URL with https:// or part of the URL for tracking. Usually, this is the final page affected by Variant B.

- Events: Add a goal as an event, following instructions for class or ID. Note that event data aligns with the page view limit for heatmaps. Additional limits can be purchased if needed.

- Sending Events to GA4: Select the checkbox to send event IDs to GA4.

- Description: Add a note about what you changed and the test’s goal, so you can recall the details 2-3 weeks later.
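
The Goals setting above accepts either an exact URL or part of a URL. The following sketch illustrates the difference between the two modes. It is a hypothetical illustration only: the function name, the trailing-slash handling, and the example URLs are assumptions, and it does not describe how Plerdy matches goals internally.

```python
def goal_matches(visited_url: str, goal: str, exact: bool) -> bool:
    """Return True if a visited URL counts as reaching the goal.

    exact=True  -> the whole URL must match (a trailing slash is ignored).
    exact=False -> the goal only has to appear somewhere in the URL.
    """
    if exact:
        return visited_url.rstrip("/") == goal.rstrip("/")
    return goal in visited_url


# Exact goal: only the bare "Thank You" page counts.
print(goal_matches("https://example.com/thank-you/",
                   "https://example.com/thank-you", exact=True))
# Partial goal: URLs with extra query parameters still count.
print(goal_matches("https://example.com/thank-you?order=1",
                   "/thank-you", exact=False))
```

A partial goal is more forgiving when the final page carries order IDs or tracking parameters, while an exact goal avoids counting unrelated pages that happen to share part of the path.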

Editing Variant B

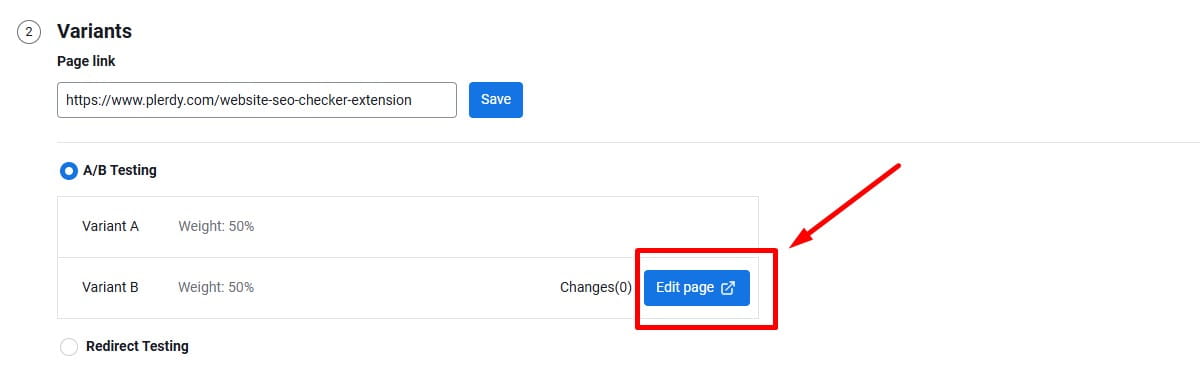

A/B Testing:

- Editing Page: Upon opening the Variant B editing panel, select the checkboxes for “Select element” and “Interactive Mode.”

- Make Changes: Apply 1-3 changes to an element, such as changing its color or size or hiding it. You can also edit HTML content like links or images.

- Save Changes: Save individual element changes, and use the “Save all changes” button to save all test modifications.

Edit Page: This option is used when you want to change specific elements on the current page without creating a separate URL for Variant B. It works well for small A/B tests such as changing a button color, headline text, spacing, icon, or image. In most cases, this is the best choice when you want to test one clear hypothesis and measure how users react to a focused UI change. It is faster than rebuilding a full page and helps isolate which exact edit improved conversions.

Below is a simple CSS example for A/B testing a button color on the page:

.cta-button {

background-color: #EE5622 !important;

border-color: #EE5622 !important;

color: #ffffff !important;

}If there are several buttons with the same class, it is better to use a unique attribute when available. This helps you change only one target button instead of all buttons on the page.

<a class="cta-button" plerdy-tracking-id="50130403501">Buy Now</a>a[plerdy-tracking-id="50130403501"] {

background-color: #EE5622 !important;

border-color: #EE5622 !important;

color: #ffffff !important;

}You can also target only the second link inside the same wrapper by using a child selector. This is useful when both buttons have the same class and no unique attribute is available.

<div class="bbbb">

<a class="cta-button" href="#">Variant A</a>

<a class="cta-button" href="#">Variant B</a>

</div>.bbbb > a:nth-child(2) {

background-color: #EE5622 !important;

border-color: #EE5622 !important;

color: #ffffff !important;

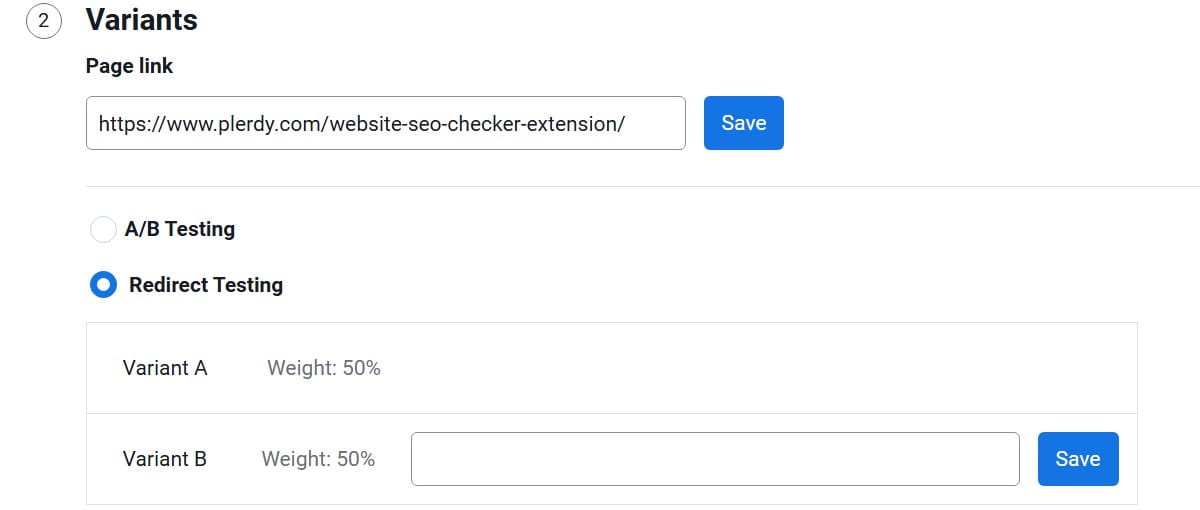

}Redirect Testing:

Use this option when Variant B is a separate new page instead of edited elements on the original page.

- When to Use It: Redirect Testing works best when you plan many changes at once, for example on a homepage, product page, category page, or another important landing page.

- How It Works: In this case, users who land on Variant A are redirected to the new test page created as Variant B, so you can compare the original page with the redesigned version.

- Why It Helps: This format is useful when small element edits are not enough and you need to test a fully updated page concept.

Launching the Test

- Review Settings: Open the test settings page in a new tab and refresh.

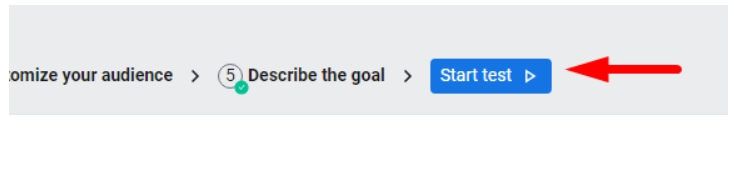

- Start the Test: Click the “Start test” button. You can also view all changes made to the website page by selecting “Show all changes.”

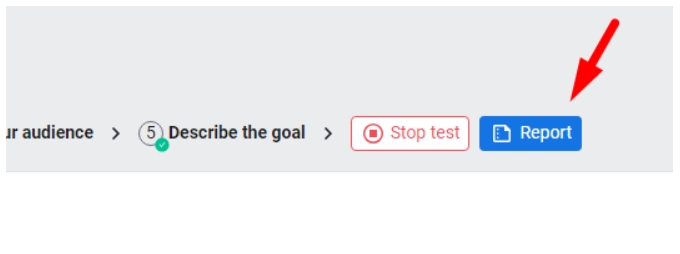

Analyzing A/B Testing Results

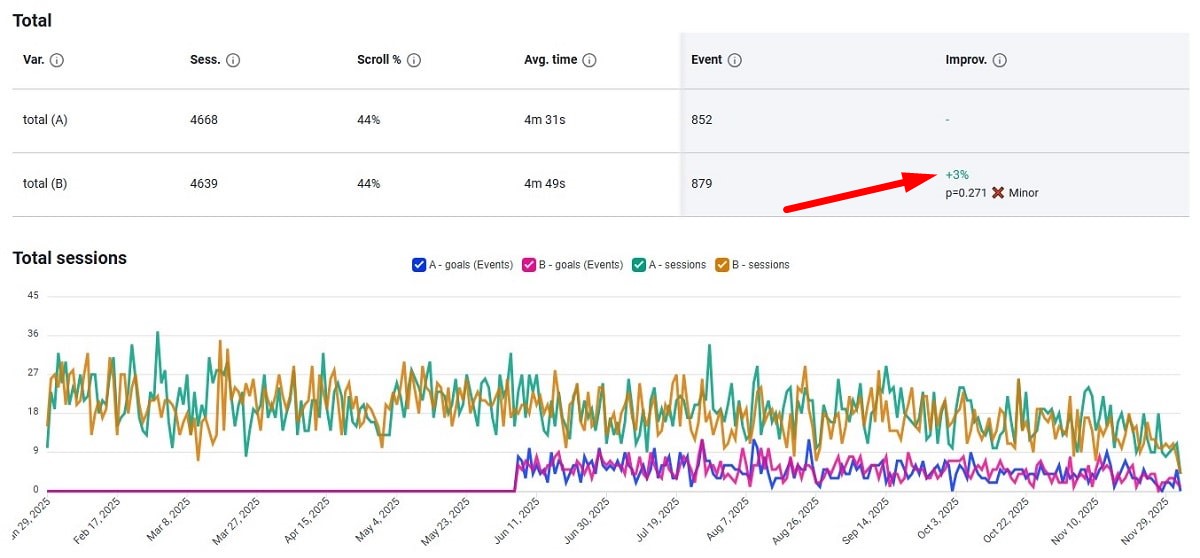

- Session Distribution: Each variant receives roughly 50% of user sessions.

- Key Metrics: Check the “Improvement” column on the Total Sessions tab to find the winning version.

- Device Analysis: Review which device type contributed most to the winning variant’s result.

- Traffic Analysis: Find out which traffic channel worked best for you.
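
To make the “Improvement” figure easier to read, here is a minimal sketch of one common way to express it: the relative change in Variant B’s conversion rate compared with Variant A. This is an assumption for illustration; the exact formula Plerdy uses is not stated here, and the class name, function name, and session counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    sessions: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        """Share of sessions that reached the goal (0.0 when no sessions)."""
        return self.conversions / self.sessions if self.sessions else 0.0


def improvement_percent(a: VariantStats, b: VariantStats) -> float:
    """Relative change of B's conversion rate versus A's, in percent."""
    if a.conversion_rate == 0:
        raise ValueError("Variant A has no conversions to compare against")
    return (b.conversion_rate - a.conversion_rate) / a.conversion_rate * 100


# Hypothetical results: A converts 50 of 1000 sessions, B converts 60 of 1000.
variant_a = VariantStats(sessions=1000, conversions=50)
variant_b = VariantStats(sessions=1000, conversions=60)
print(f"{improvement_percent(variant_a, variant_b):.1f}%")
```

Under this definition a positive value means Variant B converts better than Variant A, and a negative value means it converts worse.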

Interpreting Negative Values for Variant B

If Variant B shows a negative value in the improvement metric, Variant A may be outperforming it. In this case, you have two options:

- Wait a Few More Days: With low traffic, or while users are still adjusting to the change, early results may not reflect actual performance. Waiting a few more days provides the additional data needed for a reliable conclusion.

- Consider Variant B Unsuccessful: If the negative trend persists over a significant period, it is reasonable to conclude that Variant B is underperforming relative to Variant A. In that case, examine which aspects of Variant B might be causing the drop in performance, and consider changing or removing the modifications made in this variant.
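
One way to choose between these two options is to check whether the gap between the variants is larger than random noise would explain. The sketch below applies a standard two-proportion z-test using only the Python standard library. It is an independent illustration rather than a Plerdy feature, and the function name, the sample counts, and the usual 0.05 threshold are assumptions.

```python
import math
from statistics import NormalDist


def two_proportion_p_value(sessions_a: int, conversions_a: int,
                           sessions_b: int, conversions_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conversions_a / sessions_a
    rate_b = conversions_b / sessions_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (conversions_a + conversions_b) / (sessions_a + sessions_b)
    std_err = math.sqrt(pooled * (1 - pooled)
                        * (1 / sessions_a + 1 / sessions_b))
    if std_err == 0:
        return 1.0  # no conversions (or all conversions): nothing to compare
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical early result: B trails A, but on little traffic.
early = two_proportion_p_value(1000, 50, 1000, 40)
# Hypothetical later result: the same gap on ten times the traffic.
later = two_proportion_p_value(10000, 500, 10000, 400)
print(f"early p-value: {early:.3f}, later p-value: {later:.4f}")
```

A p-value above the chosen threshold suggests the negative value could still be noise, which supports waiting. A p-value below it suggests Variant B is genuinely underperforming.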

Tips for Checking Variant B

- Multiple Browsers: Check Variant B in several browsers, because you cannot see both variants in the same browser session within half an hour.

Final Thought

A/B testing is an effective tool for improving a website. These guidelines will help you make sound decisions based on user preferences and behavior. Remember that effective A/B testing depends on ongoing learning and adaptation. Happy testing!