User testing is key to making products user-friendly. This guide covers why it matters, the main methods, and how to apply them. We explain how to set up tests and make sense of the results so that your product connects well with users. The guide mixes expert advice with real examples, and it is useful for both experienced and new designers who want to improve their user testing skills, which are vital for product success. Plerdy’s tools can further support your user testing by providing valuable insights.

User Testing: A Prerequisite for User-Centric Products

In the realm of product development, user testing emerges as a cornerstone. It’s not just a procedure; it’s a strategic approach to ensure products align with the needs and expectations of end-users. User testing goes beyond mere assumptions, diving into how real people interact with your product, revealing insights that are often overlooked during the design phase.

The Essence of User Testing

At its core, user testing is about validating the usability and effectiveness of a product. Whether it’s a website, an app, or any digital tool, the goal is to understand how actual users perceive and interact with the product. This process is critical for identifying and resolving any usability issues before the product reaches the broader market.

User testing is user-centered by design: it prioritizes user needs over designer or developer preferences. This shift in priority matters in a market where user experience determines product success.

Different Types of User Testing

User testing can take various forms, each suited to different stages of the product development cycle and addressing different aspects of user interaction.

- Usability Testing: This is the most direct form of user testing. Here, participants are asked to complete tasks while observers note where they encounter problems or experience confusion. Usability testing can be either moderated (with a guide) or unmoderated (where users interact with the product independently).

- A/B Testing: Often used in web design and marketing, A/B testing involves presenting two product versions to users. Each version has slight variations, and the goal is to see which version performs better regarding user engagement and satisfaction.

- Surveys and Interviews: These methods gather qualitative data from users. They are particularly useful for understanding user attitudes, experiences, and satisfaction levels with your product.

- Beta Testing: This occurs in the final stages of development. A product is released to a selected group of users under real conditions to identify any last-minute fixes or improvements.

Benefits of User Testing

The benefits of user testing are manifold. It significantly reduces the risk of product failure by identifying potential issues early. It also ensures a higher level of user satisfaction, leading to better engagement rates, increased loyalty, and positive word-of-mouth. Moreover, user testing can lead to innovative ideas and improvements, as feedback from actual users provides a different perspective that can be invaluable for the product development team.

In conclusion, user testing is not just a step in the product development process; it’s a crucial element that can dictate the success or failure of a product. By understanding and effectively implementing user testing, businesses can significantly enhance user satisfaction and ultimately, the product’s success in the market.

Exploring Diverse Methods of User Testing

User testing, a crucial component of product development, encompasses various methods, each tailored to capture distinct user interactions and feedback. Understanding these methods is vital for selecting the right approach based on your product’s specific needs and development stage.

1. Usability Testing: The Cornerstone of User Testing

Usability testing involves real users performing tasks under observation to identify any usability issues. This method is vital for understanding how intuitive and user-friendly a product is.

- Moderated Usability Testing: Using this method, a moderator will lead the participants and ask them questions in a controlled setting. It provides deeper insights as the moderator can probe further based on user responses.

- Unmoderated Usability Testing: This approach scales easily and is more flexible. Users engage with the product in their own environment, often while tools such as Plerdy record sessions and collect statistics on user activity automatically.

- Remote Usability Testing: Particularly useful in today’s digital landscape, remote testing employs online tools to observe user interactions. This method saves time and resources while offering a broader, more diverse user base.

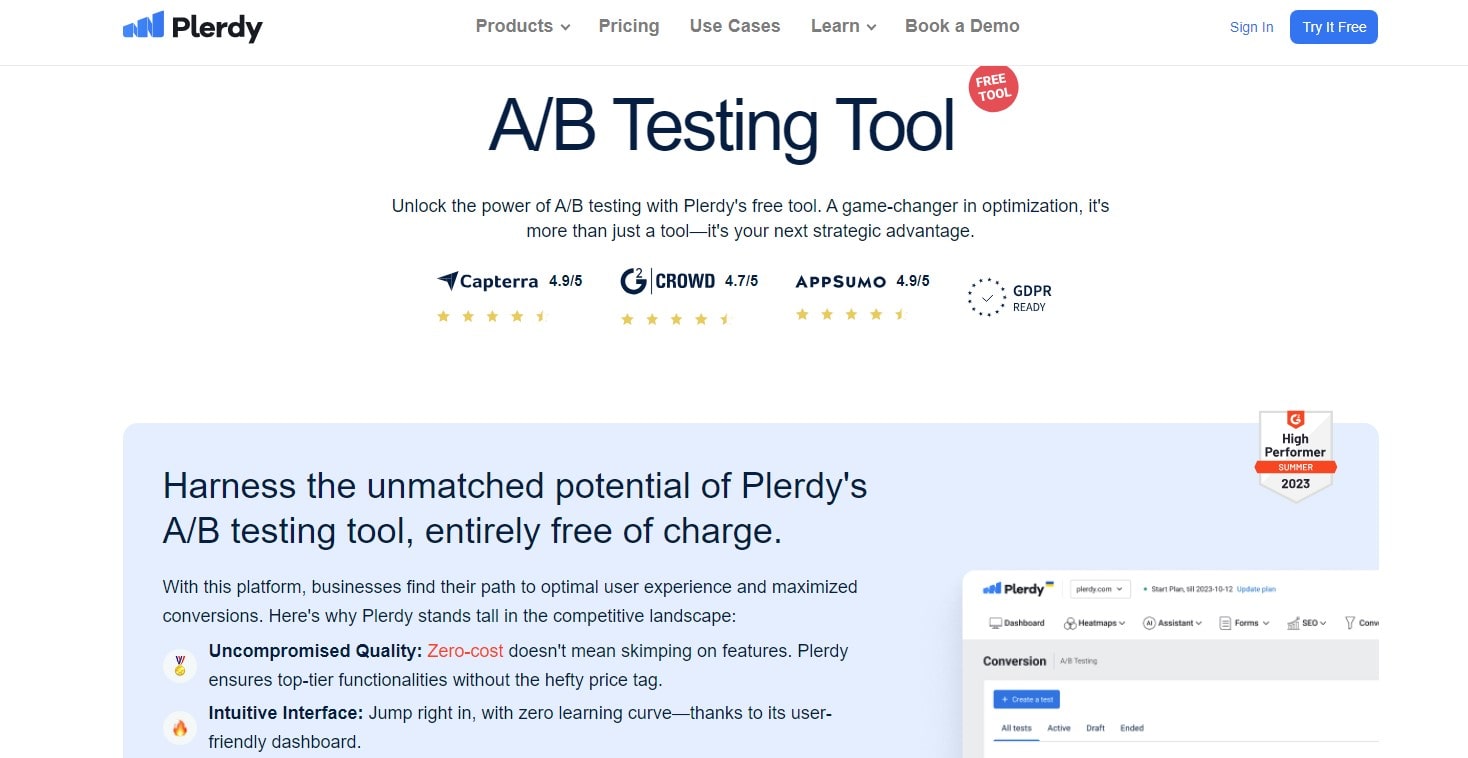

2. A/B Testing: Comparing to Optimize

A/B testing involves presenting two variants of a product to different user groups and analyzing which version performs better. It’s commonly used in website development to test elements like layouts, content, and calls to action.

- Implementing A/B Testing with Plerdy: Tools like Plerdy can track user interactions with different web page versions, providing clear data on which version resonates better with the audience.
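
To decide whether one version really "performs better", the difference between the two variants is usually checked for statistical significance. The following is a minimal sketch using a two-proportion z-test, which is one common choice and not a method prescribed by this guide or by Plerdy. The function name and the visitor and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of variants A and B.

    Returns (rate_a, rate_b, z, p_value) for a two-sided test.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Hypothetical numbers: 1,000 visitors per variant.
rate_a, rate_b, z, p_value = two_proportion_z_test(100, 1000, 130, 1000)
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p_value:.3f}")
```

A small p-value (0.05 is a common threshold) suggests the difference is unlikely to be due to chance alone. The sketch assumes each variant received some, but not all, conversions; otherwise the standard error is zero and the test is undefined.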

3. Surveys and Interviews: Direct User Feedback

Surveys and interviews are traditional yet powerful tools for user testing. They allow direct feedback from users, providing qualitative data on user experiences, preferences, and issues.

- Surveys: These are structured questionnaires that users can fill out after using a product. They are excellent for gathering broad feedback from a large user base.

- Interviews: More in-depth than surveys, interviews involve one-on-one sessions with users, offering deeper insights into user experiences and attitudes.

4. Beta Testing: The Final Checkpoint

In the run-up to a product’s release, beta testing is the last stage of testing. It involves releasing a near-complete product to a select group of users to identify any last-minute issues in a real-world scenario. Beta testing helps in fine-tuning the product based on real user data.

5. Eye-Tracking Studies: Understanding Visual Engagement

Eye-tracking involves studying where and how users look at a screen while interacting with a product. By revealing where users focus their attention, it helps optimize design elements for engagement.

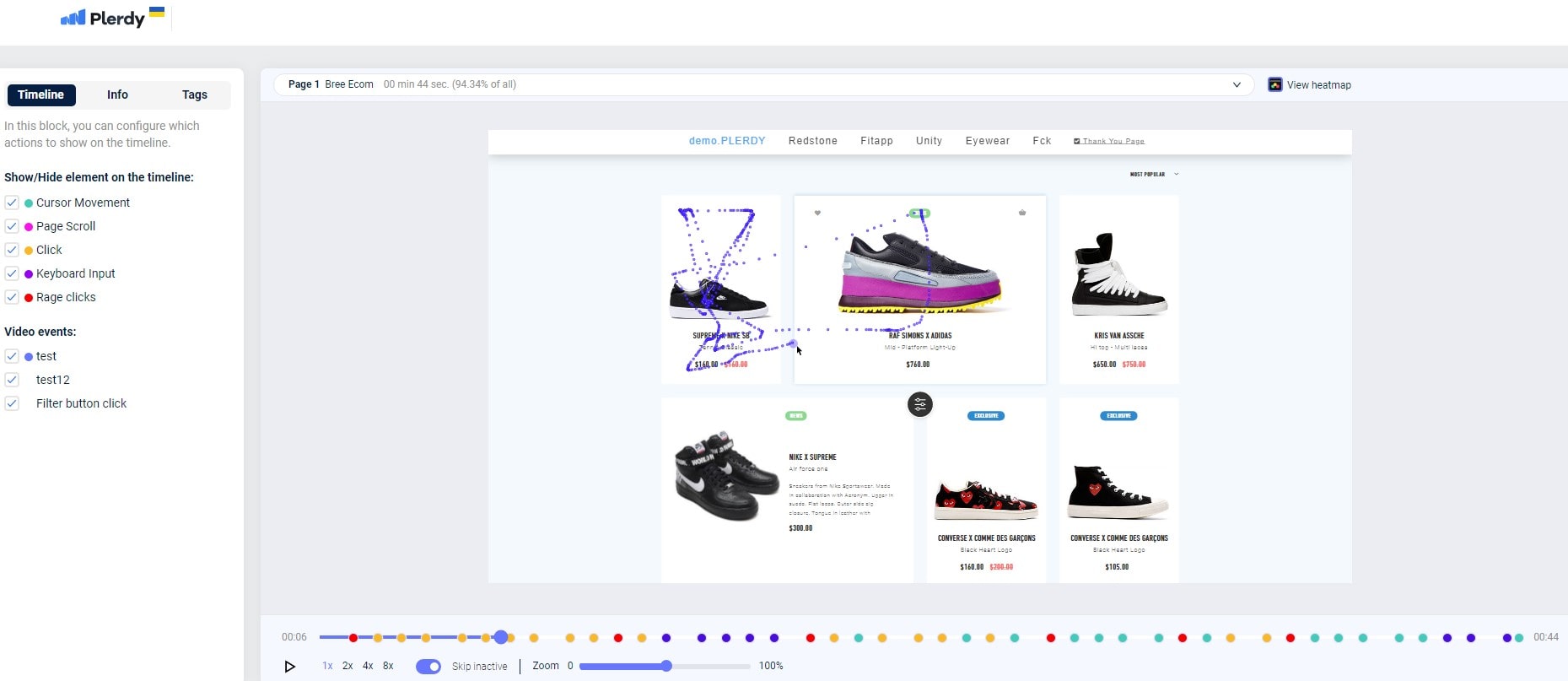

6. Heatmaps and Session Recordings with Plerdy

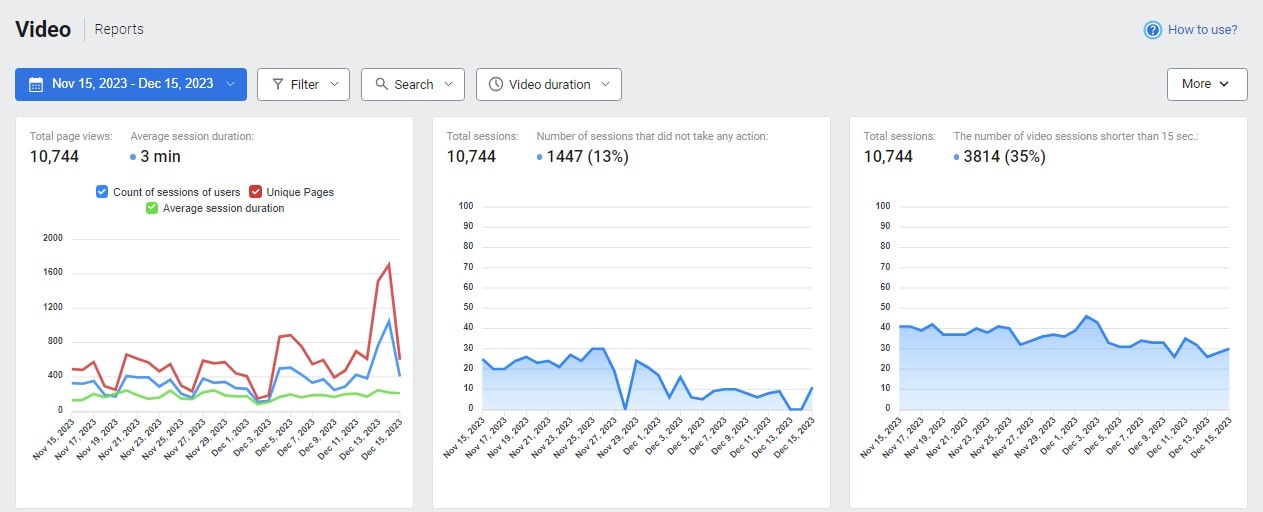

- Heatmaps: Tools like Plerdy offer heatmap functionality, showing where users click, move, and scroll on a page. This visual data helps understand user behavior and preferences, guiding website design and functionality improvements.

- Session Recordings: Plerdy’s session recordings capture real user interactions, offering a comprehensive view of how users navigate a site. This method is invaluable for identifying usability issues and areas for improvement.
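
The idea behind a click heatmap can be illustrated by grouping click coordinates into a coarse grid and counting the clicks per cell. This is only a sketch of the general principle, not how Plerdy implements its heatmaps; the function name, grid size, page dimensions, and click data are all hypothetical.

```python
from collections import Counter


def click_grid(clicks, page_width, page_height, cols=4, rows=4):
    """Count clicks per grid cell; returns a rows x cols list of counts."""
    counts = Counter()
    for x, y in clicks:
        # Clamp so clicks on the right or bottom edge fall in the last cell.
        col = min(int(x / page_width * cols), cols - 1)
        row = min(int(y / page_height * rows), rows - 1)
        counts[(row, col)] += 1
    return [[counts[(r, c)] for c in range(cols)] for r in range(rows)]


# Hypothetical click coordinates in pixels on a 1200 x 800 page.
clicks = [(100, 50), (120, 60), (1150, 40), (600, 400), (610, 390), (1199, 799)]
grid = click_grid(clicks, page_width=1200, page_height=800)
for row in grid:
    print(" ".join(f"{n:2d}" for n in row))
```

Cells with high counts correspond to the "hot" areas of a page; a real tool would render them as colors over a screenshot.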

7. Card Sorting: Organizing Content Effectively

Card sorting reveals how people organize information. Users are asked to organize content into groups that make sense to them. This method is particularly useful for structuring website navigation and content hierarchy.
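
One simple way to summarize card sorting results is to count how often participants placed two cards in the same group. The sketch below assumes each participant's sort is recorded as a list of groups; the card names and the `co_occurrence` helper are hypothetical.

```python
from collections import Counter
from itertools import combinations


def co_occurrence(sorts):
    """Count how many participants placed each pair of cards in the same group."""
    pairs = Counter()
    for groups in sorts:
        for group in groups:
            # Sort names so each pair is always stored in the same order.
            for a, b in combinations(sorted(group), 2):
                pairs[(a, b)] += 1
    return pairs


# Hypothetical results: each participant's sort is a list of groups of card names.
sorts = [
    [["Pricing", "Plans"], ["Blog", "Guides", "FAQ"]],
    [["Pricing", "Plans", "FAQ"], ["Blog", "Guides"]],
    [["Pricing", "Plans"], ["Blog", "Guides"], ["FAQ"]],
]
pairs = co_occurrence(sorts)
for (a, b), count in pairs.most_common():
    print(f"{a} + {b}: {count}/{len(sorts)} participants")
```

Pairs that most participants group together are good candidates for sharing a navigation section, while cards with no stable partner may need clearer labels.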

8. Task Analysis: Breaking Down User Interaction

Task analysis involves breaking down each step a user takes to complete a task. This detailed examination helps identify pain points and areas where users struggle, providing critical insights for improving task flows.

9. Guerrilla Testing: Quick and Informal Feedback

Guerrilla testing is a low-cost, informal method where testers approach people in public places like cafes to try out a product. It provides quick and spontaneous feedback, though it may lack the depth of more structured methods.

10. Prototype Testing: Early Stage Feedback

Prototype testing involves users interacting with a prototype or early version of a product. This early-stage testing is crucial for getting initial feedback and making changes before further development.

11. Focus Groups: Collective User Insights

Focus groups are moderated by researchers and involve a few users discussing the product. This method provides insights into user attitudes and how users perceive a product in a social context.

12. Asynchronous Testing

Asynchronous testing is a versatile and efficient user testing method, particularly beneficial for gathering feedback across different time zones and schedules. Unlike traditional methods, it doesn’t require simultaneous participation from users and evaluators. This method works well for websites and apps, since participants can use them whenever it suits them.

Participants complete tasks independently in asynchronous testing, often with tools tracking their interactions. This method provides authentic user data, as users are in their natural environment, free from the influence of a moderator. Tools like Plerdy can be instrumental here, capturing user behavior through heatmaps and session recordings. This data helps discover usability flaws and areas for improvement by showing how users utilize a product.

The key advantage of asynchronous testing lies in its flexibility and scope for extensive data collection. It allows for a broad, diverse user base to participate, making the feedback more representative of the general user population. However, it requires a well-structured testing plan and clear instructions to ensure that the data collected is relevant and useful.

13. Expert Reviews and Heuristic Evaluation

Expert reviews and heuristic evaluations are analytical methods of user testing, where usability experts review a product based on established principles and heuristics. This method is excellent for early product development and short evaluations.

Heuristic evaluation involves experts examining the product and identifying usability issues based on established criteria like Jakob Nielsen’s Ten Usability Heuristics. These heuristics include user control, consistency, error prevention, and efficiency of use. Experts analyze the product, looking for deviations from these principles and suggesting improvements.

Expert reviews, on the other hand, are more subjective and depend on the experience and perspective of the reviewer. They involve a comprehensive product analysis, considering factors like user experience, accessibility, and overall design. Experts provide detailed feedback and actionable recommendations, drawing from their experience and industry best practices.

Both methods offer a quick turnaround and can be cost-effective, as they don’t require recruiting multiple test users. However, both are limited by the experts’ own perspectives and may not fully capture the end user’s experience. Thus, while expert reviews and heuristic evaluations provide valuable insights, they are often used in conjunction with other user testing methods for a more rounded understanding of user experience.

User testing is not a one-size-fits-all approach. Each method offers unique benefits and insights. Tools like Plerdy enhance these methods by providing in-depth, actionable data, making it easier for businesses to understand and cater to their users’ needs effectively. The choice of user testing method depends on the product stage, goals, and resources. By combining different methods, you can comprehensively understand your users, leading to a more successful, user-centric product.

Crafting a Blueprint for Successful User Testing

Effective user testing hinges on meticulous planning and preparation. This phase sets the foundation for insightful and actionable results. Below, we dive into the critical components of this process, ensuring a structured and efficient approach to user testing.

- Establishing Clear Objectives: The first step in planning is to establish clear, measurable objectives. What do you want to learn from the user testing? Are you looking to improve usability, validate a design concept, or identify user pain points? Setting specific goals helps determine the suitable testing method and guides the creation of task scenarios.

- Defining User Personas: Understanding who your users are is crucial. Develop detailed user personas representing your target audience. These personas should include demographic information, user behaviors, needs, and goals. This understanding ensures that the testing is focused and the feedback is relevant.

- Selecting the Right User Testing Method: Select the most appropriate user testing method based on your objectives and user personas. Each method, be it usability testing, Plerdy A/B testing, or heuristic evaluation, serves different purposes and offers unique insights. Sometimes, a combination of methods yields the best results.

- Creating Task Scenarios: Develop realistic task scenarios that users will undertake during the testing. These tasks should be representative of typical user interactions with the product. They need to be clear, achievable, and relevant to the objectives of the test.

- Recruitment Strategy: Recruiting the right participants is key. Develop a recruitment strategy that targets users who closely match your user personas. Consider factors like age, technical proficiency, and familiarity with similar products. Ensure a diverse group to get a broad range of insights.

- Preparing the Test Environment: The setup of your test environment should reflect where and how users will typically use the product. For digital products, this might mean testing on various devices and browsers. For physical products, consider the environment in which the product will be used. For authentic user behavior, the setting should be natural.

- Pilot Testing: Before conducting the actual user testing, perform a pilot test. This involves running the test with a few participants to identify any issues in the test design, such as unclear instructions or technical glitches. It helps in refining the test setup for optimal results.

- Developing a Test Plan: A comprehensive test plan is essential. It should outline the methodology, participant criteria, task scenarios, and logistics of the testing process. This plan serves as a roadmap for the testing process and ensures consistency and focus throughout the test sessions.

- Training the Test Team: Ensure that your team, including moderators and observers, are well-trained and understand their roles in the testing process. They should be familiar with the test plan, objectives, and how to interact with participants, especially in the context of moderated testing.

- Setting Up Data Collection Methods: Decide on how you will collect and record data. This might include video recordings, note-taking, screen-capturing tools, and session recording software. Ensure that the methods chosen are non-intrusive and respect participant privacy.

- Communicating with Participants: Clear communication with participants is crucial. Provide them with all necessary information about the test, including its purpose, what is expected of them, and how their data will be used. Ensure to obtain their consent, especially for any recordings.

- Scheduling and Logistics: Plan the scheduling of the test sessions, considering the availability of participants and the test team. Ensure that the testing environment is ready and all equipment is functioning properly. Factor in enough time for each session, including setup and debriefing.

- Preparing Analysis Tools: Prepare the tools and frameworks you will use for analyzing the data collected. This might include software for analyzing qualitative data, spreadsheets for quantitative data, or Plerdy tools for analyzing heatmaps and user journeys.

- Anticipating Challenges: Identify potential challenges that could arise during testing, such as technical issues, unresponsive participants, or data collection problems. Develop contingency plans to address these challenges efficiently.
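
The planning steps above could be captured in a small, structured test plan that makes gaps visible before the first session. The following is only an illustrative sketch: the class names, fields, and sample values are hypothetical, and a spreadsheet or document would serve the same purpose.

```python
from dataclasses import dataclass, field


@dataclass
class TaskScenario:
    prompt: str            # what the participant is asked to do
    objective: str         # which test objective this task supports
    success_criteria: str  # how observers decide the task was completed


@dataclass
class UserTestPlan:
    objectives: list
    method: str
    participant_criteria: dict
    scenarios: list = field(default_factory=list)
    session_minutes: int = 45

    def uncovered_objectives(self):
        """Return objectives that no task scenario addresses yet."""
        covered = {s.objective for s in self.scenarios}
        return [o for o in self.objectives if o not in covered]


# Hypothetical plan for an online shop.
plan = UserTestPlan(
    objectives=["checkout usability", "search findability"],
    method="moderated usability testing",
    participant_criteria={"age": "25-45", "shops_online": True},
    scenarios=[
        TaskScenario(
            prompt="Buy a pair of shoes in your size",
            objective="checkout usability",
            success_criteria="Order confirmation page reached",
        )
    ],
)
print("Objectives without a task:", plan.uncovered_objectives())
```

Checking that every objective has at least one task scenario is a cheap way to catch planning gaps during the pilot test.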

A well-planned and prepared user testing process is pivotal for gaining meaningful insights. It ensures efficient, reliable, and actionable testing. By meticulously following these planning and preparation steps, you set the stage for a successful user testing initiative that can significantly enhance the user experience of your product.

Implementing User Testing: A Roadmap to Meaningful Insights

Executing user tests is a critical phase where planning converges with real-world application. It’s where theories and assumptions meet the unpredictability of human interaction. This section provides a comprehensive guide on how to conduct user tests effectively.

- Setting the Stage: Before the actual test, ensure the environment is conducive to testing. For digital products, check the technical setup, including software, internet connectivity, and recording tools. For physical products, create a realistic environment that mimics where the product would be used. Comfortable and familiar settings can elicit more natural responses from participants.

- Welcoming Participants: Start with a warm welcome to put participants at ease. Explain the purpose of the test clearly, reaffirming that the product is being tested, not their skills or knowledge. Transparency about the process and reassurance about privacy concerns can significantly improve the quality of feedback.

- Clear Instructions: Provide clear, concise instructions. Ambiguity can lead to confusion and skewed results. Participants should understand what’s expected of them. If the test involves completing tasks, ensure instructions are straightforward and allow participants to ask questions.

- Observing and Recording: As participants interact with the product, observe their behavior, expressions, and verbal cues. Note-taking, audio recording, and video capture are essential for gathering comprehensive data. Tools like screen recording software can be invaluable for digital products, capturing interactions, mouse movements, and clicks.

- Encouraging Think-Aloud Protocol: Instruct participants to verbalize their thoughts as they use the product. The think-aloud protocol is a powerful tool to understand the user’s thought process, providing insights into their experience, expectations, and any confusion they encounter.

- Non-Interference and Support: While it’s crucial to offer support, avoid leading the participant. Intervention should be minimal to ensure authentic user behavior. However, if a participant is clearly struggling, provide the necessary assistance to keep the test moving.

- Handling Feedback: Encourage honest feedback, both positive and negative. Create an open atmosphere where participants feel comfortable sharing their true opinions. Remember, constructive criticism is more valuable than unfounded praise in user testing.

- Post-Test Interview: After the test, conduct a brief interview to gather additional insights. Ask about their overall experience, what they liked or disliked, and any suggestions they might have. This can reveal insights that weren’t apparent during the test itself.

- Managing Time Efficiently: Each session should be time-boxed to maintain focus and prevent fatigue. However, allow some flexibility for deeper exploration if a participant provides interesting insights. Balance is key to effective time management during user tests.

- Data Collection and Management: Ensure all data collected is systematically organized for analysis. Whether it consists of video files, audio recordings, or notes, well-organized data simplifies the subsequent analysis.

- Respecting Privacy and Consent: Adhere to privacy laws and ethical standards. Ensure all participants have consented to the recording and use of their data. Be transparent about how the information will be used and stored.

- Inclusivity in Testing: Include a diverse group of participants to ensure the test results are representative of your broader user base. Consider factors like age, gender, cultural background, and tech-savviness to get a holistic view of user experiences.

- Iterative Testing: Consider conducting several rounds of testing, especially for more complex products. Initial tests can uncover major issues, while subsequent tests can delve into more specific aspects after initial improvements are made.

- Flexibility and Adaptability: Be prepared to adapt the testing process if something isn’t working. Flexibility can lead to more effective testing and better data collection.

- Synthesizing Observations: After each session, take time to synthesize observations. Discuss findings with the team to identify patterns and unique insights. These immediate impressions can be invaluable when combined with more thorough data analysis later.

- Post-Test Debrief with Team: Conduct a debrief with your team after the testing phase. Discuss overall impressions, surprising findings, and potential changes. This collective reflection can provide a more nuanced understanding of the data collected.

Executing user tests effectively requires a balance of meticulous planning, adaptability, and a keen eye for detail. It’s a process that demands both empathy and analytical skills. Following these procedures will help your user testing yield meaningful information and lead to products that are relevant to your audience. Remember, the goal of user testing is not just to validate your ideas, but to challenge them, ultimately leading to a product that meets and exceeds user expectations.

Transforming Data into Actionable Insights

The culmination of user testing lies in analyzing and applying the gathered data. This crucial phase transforms raw observations into actionable insights, steering product development in a user-centric direction.

- Compiling and Organizing Data: Begin by compiling all data collected during the tests. This includes notes, recordings, survey responses, and any quantitative data. Organize this information methodically to streamline the analysis process. Digital tools can help categorize and sort the data for easier access and interpretation.

- Initial Data Review: Conduct an initial review to get a general sense of the findings. Look for obvious patterns, repeated comments, or glaring issues that stand out. This initial sweep helps identify major focus areas for a more in-depth analysis.

- Quantitative Analysis: Quantitative data, such as task completion rates, time-on-task, and click-through rates, provide objective usability and user engagement measures. Use statistical tools to analyze this data, looking for trends and significant deviations. This analysis can reveal areas where users consistently encounter problems.

- Qualitative Analysis: Qualitative data offers deeper insights into user behavior, preferences, and attitudes. Analyze comments, feedback, and behaviors noted during the tests. Look for recurring themes or sentiments that indicate user satisfaction, confusion, or frustration. Qualitative analysis often reveals why users behave in certain ways.

- Identifying Usability Issues: Focus on identifying specific usability issues uncovered during testing. Classify these issues by severity – are they critical blockers or minor nuisances? Understanding the impact of each issue will guide prioritization in the development process.

- Cross-Referencing with Objectives: Cross-reference your findings with the initial objectives of the test. Did the results answer your questions? How do they align with or contradict your expectations? This comparison ensures that the analysis stays focused on your testing goals.

- Synthesizing Findings: Synthesize the data into a coherent narrative. Combine quantitative and qualitative insights to paint a complete picture of the user experience. This synthesis should highlight key issues and opportunities for improvement.

- Creating Actionable Recommendations: Transform findings into actionable recommendations. For each identified issue, propose clear, practical solutions or improvements. These recommendations should be specific, feasible, and aligned with the overall product strategy.

- Prioritizing Actions: Prioritize issues by severity and by their impact on the user experience. Address critical usability issues that hinder basic functionality first. Then, move on to enhancements that can elevate the overall user experience.

- Reporting to Stakeholders: Prepare a comprehensive report for stakeholders, summarizing the testing process, findings, and recommendations. Use visuals like graphs, Plerdy heatmaps, or video clips to illustrate key points. This report should convey the value of the user testing process and justify the proposed changes.

- Implementing Changes: Work with the development and design teams to implement the recommended changes. Ensure that the solutions are executed as intended and monitor their impact. This step may involve further testing to validate the effectiveness of the modifications.

- Continuous Improvement: User testing is not a one-time event but a continuous process of improvement. Use the insights gained to inform future development cycles. Establish a culture of ongoing testing and iteration to enhance the user experience continually.

- Monitoring Post-Implementation: After implementing changes, monitor user feedback and performance metrics to assess the impact. This ongoing monitoring can reveal if the changes have successfully addressed the issues or if further adjustments are needed.

- Learning for Future Projects: Document learnings and insights from the testing process. These should include best practices, unexpected findings, and areas for improvement in the testing process itself. This documentation can be invaluable for future projects, helping to avoid past mistakes and leverage successful strategies.
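
As an illustration of the quantitative analysis step above, task completion rate and time-on-task can be computed from simple session records. This is a minimal sketch with hypothetical data and a hypothetical record layout; real studies often export such data from a testing tool or spreadsheet.

```python
from statistics import median

# Hypothetical session records: (participant, task, completed, seconds_on_task).
sessions = [
    ("P1", "checkout", True, 95),
    ("P2", "checkout", False, 210),
    ("P3", "checkout", True, 120),
    ("P1", "search", True, 30),
    ("P2", "search", True, 42),
    ("P3", "search", True, 35),
]


def task_metrics(records):
    """Return {task: (completion_rate, median_seconds_for_completed_attempts)}."""
    by_task = {}
    for _, task, completed, seconds in records:
        by_task.setdefault(task, []).append((completed, seconds))
    metrics = {}
    for task, attempts in by_task.items():
        # Time-on-task is only summarized for attempts that succeeded.
        done = [secs for ok, secs in attempts if ok]
        rate = len(done) / len(attempts)
        metrics[task] = (rate, median(done) if done else None)
    return metrics


metrics = task_metrics(sessions)
for task, (rate, med) in metrics.items():
    print(f"{task}: {rate:.0%} completed, median time {med}s")
```

A task with a low completion rate or an unusually long median time is a natural starting point for the qualitative review of recordings and notes. With only a handful of participants, these figures indicate direction rather than precise measurements.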

Analyzing and applying test results is a complex but rewarding process. It requires a careful balance of analytical rigor and creative problem-solving. You can significantly improve your product’s usability and user satisfaction by thoroughly examining user testing data and applying the insights gained. This phase is not just about fixing problems; it’s about learning, evolving, and fostering a deep understanding of your users to create products that truly resonate with their needs and expectations.
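
The severity-based prioritization described in this section could be sketched as a simple sort. The severity labels, the tie-breaker (number of participants affected), and the sample issues are assumptions made for illustration, not a scheme defined by this guide.

```python
# Lower rank means higher priority; the labels are assumed for this example.
SEVERITY_RANK = {"critical": 0, "major": 1, "minor": 2}

# Hypothetical findings: (issue, severity, participants_affected).
issues = [
    ("Promo code field hidden on mobile", "minor", 2),
    ("Checkout button unresponsive", "critical", 4),
    ("Search filters reset unexpectedly", "major", 3),
    ("Password rules not shown", "major", 5),
]

# Sort by severity first, then by how many participants hit the issue.
prioritized = sorted(issues, key=lambda i: (SEVERITY_RANK[i[1]], -i[2]))
for issue, severity, affected in prioritized:
    print(f"[{severity}] {issue} ({affected} participants)")
```

Teams may weight other factors as well, such as implementation effort or business impact, but an explicit ordering like this keeps the discussion with stakeholders concrete.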

Conclusion

User testing is vital. It bridges the gap between what users expect and the final product. Careful planning and execution, along with deep analysis, make it powerful. It turns good products into great ones. This process improves both usability and our understanding of users. Continuously adapting products like Plerdy based on feedback is key. This creates solutions that truly meet user needs. Plerdy is also free for many sites. Successful user testing transforms feedback into real improvements. It shapes products into something users need and love.