Explore the subtle differences between usability testing and user testing in product development. Both aim to improve the user experience, but they take different paths to get there.

- User testing centers on a user’s interaction with a product or service. Like a tour guide, it steers the development process using real user feedback.

- Usability testing, by contrast, is like a meticulous investigator who closely examines the product’s design and interface to make sure it performs flawlessly.

Learn both approaches and combine them in your testing. Find the right balance to get the most out of your product’s usability and effectiveness. Discover the magic of Plerdy, a tool built to improve your User Experience (UX) and Conversion Rate Optimization (CRO). Let Plerdy give you strong insights for improving your product’s performance.

Don’t settle for mediocrity; aim for a flawless product experience. Success lies in the small details, and skilled testing helps you turn those details into major wins for your product. Start this illuminating journey right now.

What is User Testing?

Imagine a conductor expertly guiding a symphony’s performance: that is user testing in product development. It’s the process in which real users engage with a product, and their feedback and experiences help shape its development. Take a streaming app: user testing makes sure the app’s content, layout, and functionality strike the right balance for its audience.

Here is how it works:

- Potential users interact with the product, either completing specified tasks or simply exploring its features.

- Data flows in during these sessions, providing priceless insights into users’ experiences, challenges, and eureka moments.

- This feedback is the lifeblood of user testing. It lights the way to refining the product by removing flaws and building on strengths.

User testing is like a mirror that shows the product’s usability from the users’ point of view. Whether for fitness apps or e-commerce systems, it connects user expectations with product design. It can turn a user’s confusing path through a maze into a seamless, enjoyable trip. Fasten your seatbelt, start the engine, and make user testing your road map for building premium, user-approved products. Get ready for the ride and watch your product speed down the highway to success!

Why do you Need User Testing?

Step into the world of product creation and user testing is clearly a superpower. It’s the compass that helps you build products that appeal to users, increase engagement, and drive success. But why does it play such a central role?

Imagine a software company launching a groundbreaking health app. User testing would reveal where users stumble, what they find confusing, and what they find easy. It does more than point out obstacles: it shows the way to better navigation, streamlined features, and an engaging user interface.

Here are strong reasons to include user testing in your product development process:

- User testing helps identify what users need and want, paving the way for relevant, effective products.

- It’s the antidote to tunnel vision. Capturing many user perspectives helps you avoid design and usability blind spots.

- It provides hard data that lets you develop with confidence, reduce guesswork, and make deliberate design decisions.

Imagine a well-known online retailer that skips user testing. It might end up with a cluttered interface, unclear navigation, and, ultimately, a drop in user satisfaction. By including user testing, it can turn potential risks into benefits and increase user engagement and profitability.

All things considered, user testing is the lighthouse guiding you toward products that are not just used but loved. Start user testing now to unlock your product’s potential.

What is Usability Testing?

Imagine a detective painstakingly examining every detail to crack a case. In product development, that is usability testing. It’s the analytical study of how users interact with a product and how efficiently they can use it.

Consider a mobile banking app. Usability testing could show how easy the bill-payment flow is to use, or point out where people get stuck when transferring money.

Usually, usability testing consists of:

- Observation: watch people as they use your product, noting areas of strength and weakness.

- Task analysis: measure how long users take to finish particular tasks and how many errors they make along the way.

- Satisfaction feedback: users’ feedback on how satisfied they are with your product gives priceless information on areas that need improvement.

Usability testing is like a health check-up for your product. It reveals both its weaknesses and its strengths. Without it, an online store could end up with a tedious checkout process, or an education platform with unclear navigation, which would irritate users and reduce engagement. With it, they can fine-tune their systems for smooth, enjoyable user experiences.

Simply put, successful product development and user-centric design depend heavily on usability testing. Now is the time to add usability testing to your product playbook. Ready, set, go!

Why do you Need Usability Testing?

Usability testing is like a magnifying glass in product development; it brings your product’s finer points and defects into focus. Why is this crucial? Imagine a fledgling SaaS startup with a groundbreaking idea. Without usability testing, it may launch with a confusing interface or complicated processes, which would cause user frustration and damage its reputation.

Here’s why usability testing should be a golden rule of your product development:

- Spotting problems early: finding design and functional flaws early on prevents user bottlenecks from developing. Remember that the best path is a seamless one for the user.

- Boosting the bottom line: perfecting usability helps you raise user satisfaction, build loyalty, and, along with that, raise the profitability of your product.

- Outpacing the competition: a flawless user experience gives you a competitive advantage. Users who enjoy your product stay around and tell others.

Think of a well-known e-learning platform. If it neglects usability testing, its course structure may be chaotic or its sign-up process difficult, driving prospective students away. Usability testing helps it polish the platform to a high shine, attracting students and setting the stage for success.

Usability testing is essentially the strong armor protecting your product from underperformance and user dissatisfaction. So get ready to make usability testing part of your product’s path to success! Let’s get the ball rolling.

How Plerdy Helps With User Testing and Usability Testing

Plerdy is a comprehensive tool for user and usability testing that supports successful product development. It’s the secret ally that an online clothing store or a modern mobile game developer needs to truly understand and improve their user interface.

Here is a taste of Plerdy’s powers:

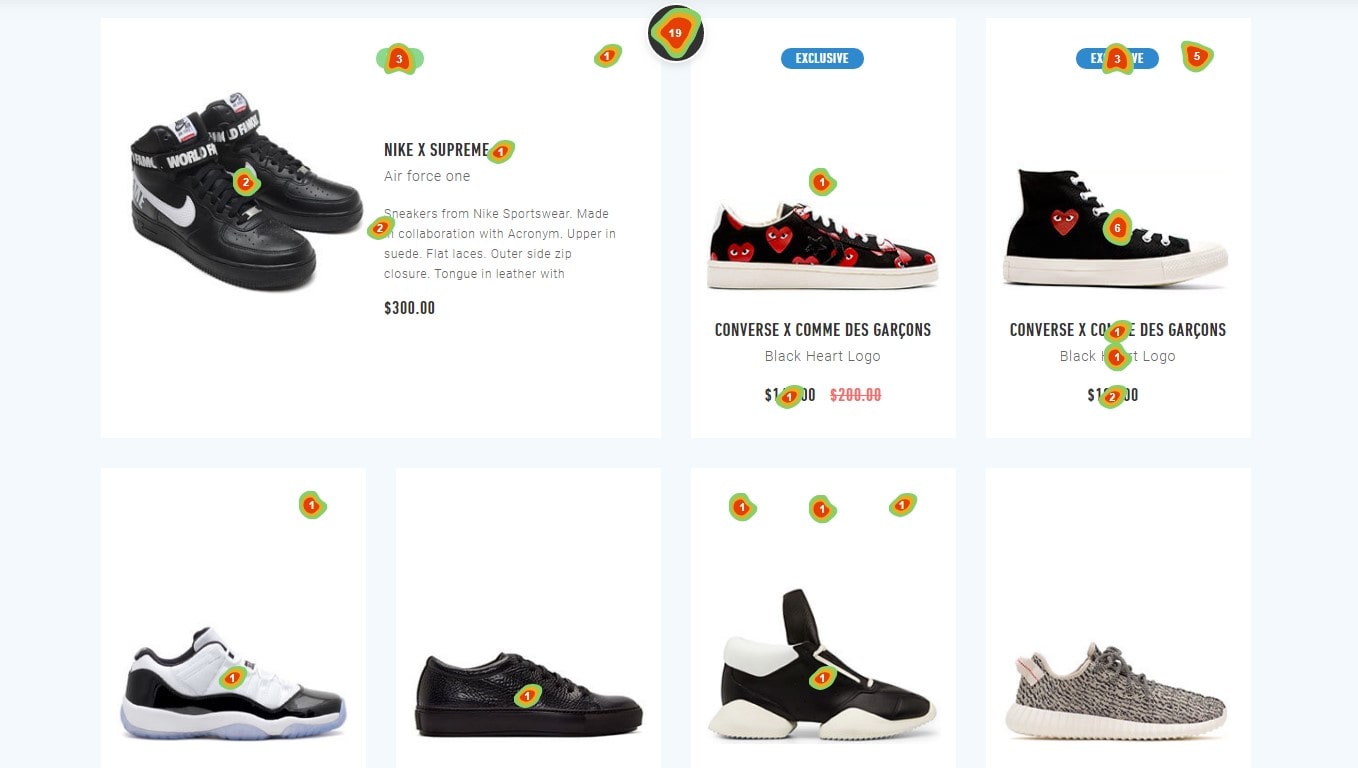

- On-point heatmaps: Plerdy’s heatmaps give a visual picture of user behavior. Hotspots highlight areas of customer engagement, helping you emphasize the key features of your product.

- Comprehensive event tracking: Plerdy’s event tracking tool lets you see how users engage with various components of your product, so you can guide and improve these interactions.

- Detailed form analysis: Plerdy evaluates your forms and points out the fields that create friction, helping you smooth out any flaws in the process.

Think about an up-and-coming music streaming service. Using Plerdy, it can gain important insight into how users interact with features, content, and platform navigation. These findings can then be turned into practical improvements to the user experience.

In the big picture of product development, Plerdy is the lighthouse guiding you toward unmatched user satisfaction. With Plerdy at your side, you can create truly beautiful products rather than merely useful ones. Time to gear up and sprint toward product greatness with Plerdy!

What is the Difference Between User Testing and Usability Testing?

Let us explore the core of two important aspects of product development: usability testing and user testing. These twins of the testing world share the goal of improving user experience but differ in the details.

User testing sets out to assess the product from the perspective of actual users. Imagine an e-commerce shop launching a line of athletic wear. Here, user testing means gathering potential users, tracking their engagement with the site, and collecting direct feedback. The emphasis is on understanding users’ needs, preferences, and the journey they take through the platform.

Usability testing, by contrast, takes center stage when it comes to determining how “user-friendly” a product is. For a fitness app, for example, usability testing would assess how easily users can track their workouts, navigate the app’s layout, and sync their data with other devices.

Here is a brief synopsis:

- User Testing:

  - Puts the product in users’ hands

  - Collects direct feedback from users

  - Evaluates the overall user experience and satisfaction

- Usability Testing:

  - Assesses the user-friendliness of the product

  - Focuses on ease of navigation and understanding

  - Pinpoints areas of friction in the user interface

Although these two testing techniques serve different purposes, their ultimate goal is the same: to shape a product that effortlessly meets user needs and offers a great experience. By carefully combining usability testing with user testing, businesses can cut through the clutter and deliver products that truly appeal to their users. In the symphony of product creation, they are the harmonious pair that strikes the right chord of user satisfaction.

Let’s examine the key differences between the approaches:

Testing Tip #1: Plan

Good testing requires effective planning. For user testing, take four factors into account:

- Basics: who, what, where, when, and how to test.

- Goals, main ideas, and strategies. The study should establish whether the selected approach helps the project run as expected. Does it meet the expectations of the audience? Where does it fall short? The strategy is shaped by the product’s goal; the way it is carried out shapes the tactics.

- Parameters for measuring the success of user testing.

- Possible scenarios and questions to explore.

Before beginning usability testing, settle the following points:

- Scope: the whole site, application, or product, or only part of it.

- Issues or questions that in-house marketers cannot answer, for example: can visitors reach crucial information from the main page?

- Schedule: timing and length of sessions, how often they are held, and the time a participant needs to find the solution.

- Which questions should participants be asked before and after the testing session?

- Participants and equipment: the number and types of users, how to recruit them, and the equipment used, such as monitor size and resolution, operating system, and browser.

- Data to gather, both qualitative and quantitative, including task time, error rate, and successful completion rate.

- Methods: A/B testing, usage tracking, moderated or unmoderated interviews, etc.

- Critical and non-critical errors: deviations from the scenarios that affect the validity of the findings.

Testing Tip #2: Methods

For user testing, both moderated and unmoderated interviews work well. The audience itself tells you whether it needs a particular idea and whether that idea is practical.

A/B testing makes it easier to determine user preferences and attitudes toward particular elements.
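To decide whether the difference between two variants is more than noise, the results of an A/B test can be checked with a standard two-proportion z-test. The sketch below is only an illustration: the function name and the visitor and conversion counts are made up for the example, and it assumes each visitor saw exactly one variant.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare the conversion rates of variants A and B.

    Returns (rate_a, rate_b, z, p_value) for a two-sided test.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each variant needs at least one visitor")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Hypothetical numbers: 1,000 visitors saw each call-to-action variant.
rate_a, rate_b, z, p = two_proportion_z_test(100, 1000, 150, 1000)
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p:.4f}")
```

A small p-value suggests the preference for one variant is unlikely to be chance; with small samples, treat the result as a hint rather than proof.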

You can find your first testers on crowdsourcing websites. Later, they can give the site owner regular feedback as the product or service evolves. On the same websites, you can show prospective users an introductory video explaining who needs the product and how it is used, and then invite them to place a first order. This kind of testing is recommended at the very start of a project. If you find out in advance whether the planned startup will interest the target audience, you can save part of the budget.

A new service can be tested on a group of regular customers before its public release. You won’t waste money on introductions, because you will be dealing with people who already know the brand and trust the store.

These techniques can be adapted for usability testing. The most productive ones are:

- Clickstream testing and oculography (eye tracking) help you pinpoint the screen areas that draw the most attention. This approach combines well with moderated or unmoderated interviews.

- Diary study: testers record their experience of using the product over an extended period.

- A/B tests: when necessary, they help improve the current call-to-action form, project design, email list, and other components.

- Moderated or unmoderated interviews reveal what shapes a visitor’s choice, such as a web page or a call to action. They also let you review the first impression a site or application makes and assess the usability of competitors’ similar products.

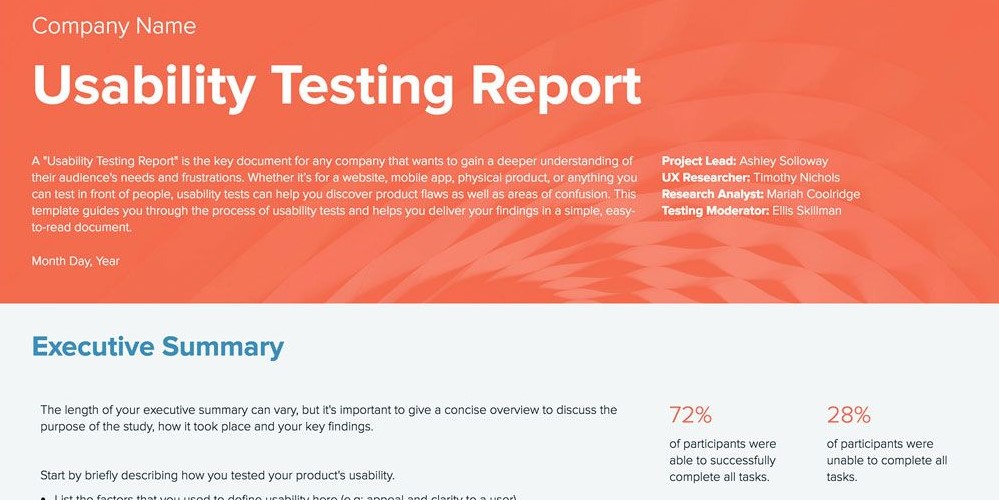

Testing Tip #3: Results

After usability testing, developers know:

- Which features of the platform appeal to users.

- Which issues need to be fixed first.

- A short outline of the next steps.

A usability test report comprises all test results, their analysis, and findings:

- User completion rate.

- Time each user spends on a test.

- The bounce rate for individual tasks.

- User feedback on their level of satisfaction.

- Testers’ recommendations: what has to be changed?

Once systematized, usability problems should be prioritized by their relevance and their impact on the project’s success, which guides your decisions. In practice, issues are sorted into five severity levels, from critical to no impact on the product.
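As a rough illustration of this prioritization, the sketch below sorts a list of findings by severity and then by how many participants hit each problem. The article only says there are five levels ranging from critical to no product impact; the three intermediate level names, the `Issue` fields, and the sample findings are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Five levels; only the two ends come from the text,
    # the intermediate names are hypothetical.
    CRITICAL = 1
    MAJOR = 2
    MODERATE = 3
    MINOR = 4
    NO_IMPACT = 5


@dataclass
class Issue:
    description: str
    severity: Severity
    users_affected: int


def prioritize(issues):
    """Most severe first; ties broken by the number of affected users."""
    return sorted(issues, key=lambda i: (i.severity, -i.users_affected))


# Hypothetical findings from a test with five participants.
issues = [
    Issue("Footer link color is slightly off", Severity.NO_IMPACT, 1),
    Issue("Checkout button does not respond", Severity.CRITICAL, 4),
    Issue("Search filter resets after each query", Severity.MAJOR, 3),
    Issue("Payment form rejects valid cards", Severity.CRITICAL, 5),
]

for issue in prioritize(issues):
    print(f"{issue.severity.name:<10} {issue.users_affected} users  {issue.description}")
```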

In user testing, the team gathers findings during the process rather than at the end. Completing a test successfully relies on constructive feedback from customers. The marketer can thus obtain both the intended test result and entirely new, unexpected insights about the product.

One of the most important aspects is segmenting the responses. Marketers learn to split one long test into several quick polls. This way, the specialist gets interim findings and can adjust the direction of the test, based on the issues identified, before the process is finished.

Information for the final report should be organized and ranked by problem priority; it can include unusual remarks and feedback from testers that could influence how the service is improved.

Testing Tip #4: Audience Test

For best results, find a group that either reflects your client base or fits your ideal user. For remote testing, several websites provide access to an international audience, and you can select participants by several criteria. Such solutions are great for user tests.

If you rely on guerrilla tests and other in-person approaches and cannot form a homogeneous test group, you should at least gather relevant background information (age, education, profession, computer and mobile use). Knowing this background helps you interpret any variations in the results more sensibly.
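One simple way to use that background information is to break the results down by a participant attribute and see whether the groups differ. The sketch below is a minimal example under assumed data: the session records, the field names `device` and `completed`, and the function name are all hypothetical.

```python
from collections import defaultdict


def completion_by_group(sessions, attribute):
    """Return the task completion rate for each value of a background attribute."""
    totals = defaultdict(lambda: [0, 0])  # value -> [completed, total]
    for session in sessions:
        bucket = totals[session[attribute]]
        if session["completed"]:
            bucket[0] += 1
        bucket[1] += 1
    return {value: done / total for value, (done, total) in totals.items()}


# Hypothetical records from a guerrilla test.
sessions = [
    {"age": 24, "device": "mobile", "completed": True},
    {"age": 31, "device": "mobile", "completed": False},
    {"age": 45, "device": "desktop", "completed": True},
    {"age": 52, "device": "desktop", "completed": True},
]

print(completion_by_group(sessions, "device"))
```

With groups this small, a difference is only a prompt for a closer look, not a conclusion.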

Ask the right questions both before and after the test to acquire appropriate information.

Spend time brainstorming with your team and possible early beta testers to identify the ideal balance.

Testing Tip #5: What to Test

The success or completion rate measures the share of participants who finish a task. It is the most commonly used usability metric. In moderated tests, the moderator records the success rate. In unmoderated tests, participants report it themselves. Alternatively, you can review the session recordings.

However, the success rate alone is not enough to capture the real user experience. You should therefore include other measures as well, for example:

- Number of errors: how many unnecessary mistakes or actions did a participant make? (The benchmark is 0.7 errors per user.)

- Task duration: how long a user takes to finish a task. There are no general benchmarks, since requirements vary too much.

- Task difficulty: users can rate the difficulty of a task from 1 (very difficult) to 7 (very easy). The typical value is 4.8.

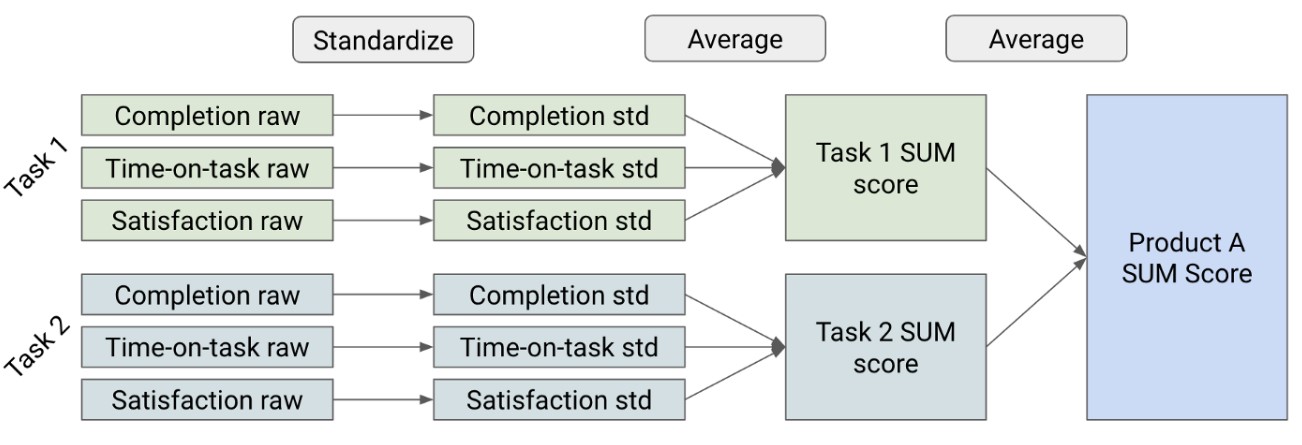

- Single Usability Metric (SUM): combining success rate, task time, and task difficulty, it shows overall usability. Across several different sectors, the average value is 65%.

- Task satisfaction: after a user finishes a task, you can capture their mood with an on-site survey.

The more metrics you collect, the more complete your picture of the test participants’ experience becomes.
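As a minimal sketch of how these measures might be summarized for a single task, the example below averages completion, errors, task time, and the 1 to 7 ease rating across participants and compares two of them with the benchmark figures quoted above. The record fields and sample values are hypothetical, and the Single Usability Metric is deliberately left out because its standardization procedure is not described here.

```python
from statistics import mean

# Benchmark figures quoted in the text above.
BENCHMARK_ERRORS = 0.7
BENCHMARK_EASE = 4.8


def summarize(results):
    """Aggregate per-participant records for one task."""
    return {
        "completion_rate": mean(1 if r["completed"] else 0 for r in results),
        "errors_per_user": mean(r["errors"] for r in results),
        "mean_task_time_s": mean(r["seconds"] for r in results),
        "mean_ease_rating": mean(r["ease"] for r in results),
    }


# Hypothetical results for four participants on one task.
results = [
    {"completed": True, "errors": 0, "seconds": 40, "ease": 6},
    {"completed": True, "errors": 1, "seconds": 55, "ease": 5},
    {"completed": False, "errors": 2, "seconds": 90, "ease": 3},
    {"completed": True, "errors": 0, "seconds": 35, "ease": 6},
]

summary = summarize(results)
print(summary)
print("Errors above benchmark:", summary["errors_per_user"] > BENCHMARK_ERRORS)
print("Ease above benchmark:", summary["mean_ease_rating"] > BENCHMARK_EASE)
```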

First, set a goal. What are you interested in? What answers are you looking for? Your chances of success rise if you have a specific aim. If you are building a sales app, for example, you may want to see how easy it is to buy products.

Testing Tip #6: The Use Phase Test

The phase in which each test is used is fairly clear-cut. A user test tells you whether users need what you offer and whether a given solution is easy for them to use. User tests can be seen as the starting point of a product’s life cycle. Once you have a product concept, user testing is crucial. The usability test follows once a prototype or a design has been created.

So when should you schedule a user test? A user test is almost always needed. Observing the target group during a user test uncovers significant insights and areas for improvement. Ideally, user testing accompanies the project for its entire duration. A user test conducted during the planning and development stages can help cut costs. During and after the market launch, a user test confirms that the product meets the intended needs and requirements. Furthermore, a user test not only helps create new products but also verifies the added value of changes made for a relaunch. In this way, user testing continuously supports agile product development and helps prevent major usability issues before they arise.

Conclusion

As we close our look at “User Testing vs. Usability Testing: What are the Differences?”, we once more underline the core of these distinct but related approaches. Usability testing assesses how user-friendly a product is, while user testing helps us explore the ideas and perceptions of actual users interacting with a product, a vital ingredient of a smooth user experience.

Tools like Hotjar and UXtweak provide insightful usability analysis, and tools like PickFu and UserTesting.com let you conduct user testing effectively. Beyond these, you can take your testing further with Plerdy, a tool for improving UX and SEO analysis. Plerdy lets you replay user sessions, tag user events, and find UI friction. It’s like examining your product from every angle with a digital magnifying glass!

Ready to validate your ideas and start fixing those problems? Begin right now with a Plerdy free trial. This all-in-one solution will help you improve user retention and noticeably boost your product’s performance. Remember, the road to success often begins with understanding and meeting your users’ needs!