Welcome to our extensive reference, “Top 15 Big Data Analytics Tools”.

These technologies act as catalysts in today’s data-driven era, turning enormous volumes of raw data into useful insights. They are the engines behind data-informed decisions. They matter whether you are a healthcare professional spotting trends that point to new therapies or a finance analyst forecasting market movements.

Here’s a taste of what these tools can do:

- Simplify big data processing

- Improve big data visualization

- Deliver real-time data

One such tool is Plerdy, which specializes in UX and SEO analysis. Plerdy helps you deliver a user-friendly experience and sharpen your SEO plan, a useful combination for growth.

Read on to explore the range of tools available and find the big data analytics tool that fits your company.

What is Big Data Analytics?

Big data analytics is the practice of turning raw, often unstructured data into meaningful insights. Its strength is handling data sets, often terabytes or petabytes in size, that conventional tools cannot manage. Here, size really counts, and “big” is not hyperbole. Many businesses rely on big data analytics software to transform raw inputs into actionable intelligence.

In healthcare, for example, it:

- Analyzes patient records in search of better treatment.

- Finds trends for fresh approaches to therapy.

- Predicts disease epidemics.

Big data analytics breaks enormous data sets into manageable, useful pieces by applying sophisticated algorithms and advanced analytics tools. The result is a fuller picture that lets companies make quick, data-driven decisions.

It is changing sectors from e-commerce, where it reveals customer behavior, to manufacturing, where it improves predictive maintenance. Big data analytics has become a foundation for innovation. Use it to stay competitive, optimize operations, and drive growth.

Different Types of Big Data Analytics

Today’s market offers a wide range of big data analytics products tailored to specific business functions. Big data analytics is not a single technique. It is usually divided into four types, each with a different purpose:

- Descriptive Analytics: Shows the “what.” Mining and aggregating data gives a clear picture of past events. Consider a retail company tracking seasonal sales swings.

- Diagnostic Analytics: Probes “why” something happened. It looks for causes using probabilities, likelihoods, and distributions. Take an airline trying to figure out why flights are delayed.

- Predictive Analytics: Anticipates “what next.” Using statistical models and forecasting techniques, it estimates future outcomes. An insurance business estimating claim amounts is one example.

- Prescriptive Analytics: Advises on “how” to handle a problem. It bases recommendations on the results of predictive analytics. Imagine an e-commerce website suggesting products based on browsing history.
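
To make the distinction concrete, here is a minimal sketch in plain Python that applies three of the four types (descriptive, predictive, prescriptive) to the same small series. The sales figures, function names, and the straight-line forecast are illustrative assumptions, not a method prescribed by any particular tool; diagnostic analytics is left out because it needs additional explanatory data.

```python
from statistics import mean

# Hypothetical monthly unit sales for a small retailer (illustrative numbers only).
monthly_sales = [100, 110, 120, 130, 140, 150]

def describe(sales):
    """Descriptive analytics: summarize what already happened."""
    return {
        "total": sum(sales),
        "average": mean(sales),
        "peak_month": sales.index(max(sales)) + 1,  # 1-based month number
    }

def fit_trend(sales):
    """Fit a least-squares straight line through (month index, sales)."""
    xs = list(range(len(sales)))
    x_bar, y_bar = mean(xs), mean(sales)
    slope = (
        sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, sales))
        / sum((x - x_bar) ** 2 for x in xs)
    )
    return slope, y_bar - slope * x_bar

def predict_next(sales):
    """Predictive analytics: extrapolate the fitted trend one month ahead."""
    slope, intercept = fit_trend(sales)
    return slope * len(sales) + intercept

def prescribe(forecast, stock_on_hand):
    """Prescriptive analytics: turn the forecast into a recommended action."""
    shortfall = forecast - stock_on_hand
    return f"order {round(shortfall)} units" if shortfall > 0 else "no reorder needed"

summary = describe(monthly_sales)
forecast = predict_next(monthly_sales)
action = prescribe(forecast, stock_on_hand=120)
```

Each step feeds the next: the summary describes the past, the forecast estimates the next month, and the recommendation acts on that estimate.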

Choose the right type of big data analytics to give your company the tools to thrive in the digital landscape and stand out from competitors.

The Importance of Big Data Analytics Tools

Big data analytics tools carry real weight in today’s digital era. They sift through big data sets to surface insights that help companies make sound decisions. In short, they turn an overwhelming volume of data into coherent, useful knowledge. Big data reporting tools help organizations visualize metrics and performance in real time.

Consider a niche online merchant selling vintage eyewear. A complete big data analytics solution lets the merchant monitor consumer preferences, purchase trends, and behavior. Once analyzed, this data can help refine marketing plans and, in turn, increase customer engagement and sales.

These are the main reasons big data analytics tools are indispensable:

- Enhanced Decision Making: Big data analysis tools translate complicated data into clear metrics so that companies can make data-driven decisions with confidence.

- Improved Customer Experience: Understanding consumer behavior helps companies tailor their products to what customers want, improving the overall customer experience.

- Risk Management: Big data tools can help forecast business risks and market changes, enabling businesses to adapt and stay resilient in new conditions.

- Operational Efficiency: By automating repetitive work and highlighting areas for improvement, they streamline company operations.

Ultimately, big data analytics tools are essential gears in the modern company’s machine. In a highly competitive digital market, they create opportunities for growth, innovation, and success by surfacing insights that were once hidden.

Factors to Consider when Choosing Big Data Analytics Tools

Selecting the right big data analytics tool can shape the course of your company. These tools can turn your big data into a wealth of insights, but only if the one you choose fits your requirements.

Take a specialty business, a boutique vineyard. The vineyard wants to better understand its customers’ tastes and adjust its production accordingly. Here is what it might weigh when choosing a big data analytics tool:

- Scalability: The winery’s data grows as the business expands. The chosen tool should handle growing data volumes without sacrificing performance.

- Big Data Processing Speed: Quick decisions matter in the competitive wine business. The tool should process data fast enough to provide real-time insights and keep the winery a step ahead.

- Ease of Use: The tool needs an understandable interface. What matters is being able to use the features, not having the most of them.

- Integration Capacity: From supply chain logistics to customer relationship management, the product should fit well with existing systems.

- Security: Strong security features are non-negotiable at a time when major data breaches are common.

Choosing a big data analytics solution is not a one-size-fits-all matter. It is about finding a tool that fits your operational structure, business objectives, and data complexity. Modern big data analytics platforms combine scalability, integration, and real-time capabilities, and they serve industries across healthcare, finance, retail, and logistics.

List of 15 Top Big Data Analytics Tools in 2025

This list of the top 15 big data analytics tools summarizes the most capable systems available for handling and analyzing vast amounts of data. Each tool is designed to solve particular analytical problems and has distinct qualities that make it valuable to companies working with big data. If you are wondering which big data tools power digital transformation, this list is your guide. Comparing these tools can help you find the best fit for your data-driven initiatives.

| Tool | Key Features | Pricing |
|---|---|---|
| Hadoop | Distributed storage, data processing, fault tolerance, and scalability. | Free (open-source), enterprise support varies by provider. |
| Apache Spark | In-memory processing, real-time analytics, machine learning, and stream processing. | Free (open-source), enterprise support varies by provider. |
| Flink | Real-time stream processing, batch processing, fault-tolerance, and stateful computations. | Free (open-source), enterprise support varies by provider. |
| Storm | Real-time processing, fault-tolerant, distributed computing, and scalability. | Free (open-source), enterprise support varies by provider. |
| ElasticSearch | Full-text search, distributed search engine, real-time data indexing, and analytics. | Free (open-source), hosted plans start at $16/month. |
| Cassandra | Distributed NoSQL database, scalability, high availability, and fault tolerance. | Free (open-source), managed service options available from cloud providers. |
| MongoDB | NoSQL database, document-based storage, scalability, and real-time analytics. | Free (open-source), Atlas hosted plans start at $57/month for dedicated clusters. |
| Hive | SQL-like querying for Hadoop, data warehousing, and scalability. | Free (open-source), enterprise support varies by provider. |
| Tableau | Data visualization, dashboards, reporting, and real-time analytics. | Starts at $70/user/month for Tableau Creator, Viewer starts at $15/user/month. |
| PowerBI | Data visualization, dashboards, reporting, and real-time analytics. | Power BI Pro $9.99/user/month, Power BI Premium starts at $20/user/month. |
| QlikView | Data visualization, dashboards, in-memory processing, and business intelligence. | Pricing available upon request, typically custom for enterprise needs. |
| RapidMiner | Data science platform, machine learning, predictive analytics, and data mining. | Free for small data sets, paid plans start at $2500/year. |
| KNIME | Data analytics, machine learning, workflow automation, and ETL. | Free (open-source), commercial support starts at €1000/year. |
| Talend | Data integration, ETL, cloud and big data tools, and real-time analytics. | Starts at $1,170/month for Talend Data Fabric. |
| Google BigQuery | Fully managed data warehouse, real-time analytics, scalability, and machine learning. | Free tier available; pricing based on usage with $5 per TB processed. |

Some of the top big data analytics companies contribute actively to open-source innovation.

1. Hadoop

Hadoop is an open-source big data analytics tool that has transformed how large data sets are handled. With its distributed storage and processing, Hadoop manages data across many servers, breaking down silos and unifying access to big data.

Consider an energy business trying to get the most from its renewable resources. Using Hadoop, it could analyze enormous volumes of meteorological data to forecast wind patterns, improve turbine performance, and maximize electricity output.

These main characteristics make Hadoop a strong big data analytics tool:

- Distributed Processing: Hadoop processes large amounts of data across a cluster of computers. This distribution provides data redundancy and speeds up processing; if one computer fails, the data remains safe.

- Scalability: For companies with changing data needs, Hadoop’s ability to scale up and down by adding or removing nodes is invaluable.

- Fault Tolerance: The system is designed to reassign work automatically to other nodes if a node fails, so jobs can continue.

- Cost-Effectiveness: Because it is open-source, Hadoop offers a reasonably affordable way for companies to handle their big data requirements.

- Flexibility: Hadoop can handle both structured and unstructured data, allowing companies to analyze many kinds of big data for a fuller understanding.

In short, Hadoop is a strong platform for big data analytics that gives companies in many fields a scalable, adaptable, reasonably priced solution.
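
Hadoop’s processing model, MapReduce, can be imitated on a single machine to show how the work is divided. The sketch below is plain Python, not Hadoop’s API: the record format, the station names, and the function names are assumptions made for illustration, and each inner list stands in for a block of data that a real cluster would hold on a separate node.

```python
from collections import defaultdict
from statistics import mean

def map_phase(record):
    """Map: parse one 'station,wind_speed' line and emit a (station, speed) pair."""
    station, speed = record.split(",")
    yield station.strip(), float(speed)

def shuffle(pairs):
    """Shuffle: group values by key, as the framework does between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reduce_phase(station, speeds):
    """Reduce: combine every value seen for one key into a single result."""
    return station, mean(speeds)

def run_job(splits):
    """Run map over every record of every split, then shuffle and reduce."""
    mapped = [pair for split in splits for record in split for pair in map_phase(record)]
    return dict(reduce_phase(key, values) for key, values in shuffle(mapped).items())

# Hypothetical wind readings, already divided into two splits.
splits = [
    ["north,10.0", "south,4.0"],
    ["north,14.0", "south,6.0"],
]
averages = run_job(splits)
```

Because the map step looks at one record at a time and the reduce step at one key at a time, both can run in parallel on different machines, which is the property the distributed processing bullet describes.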

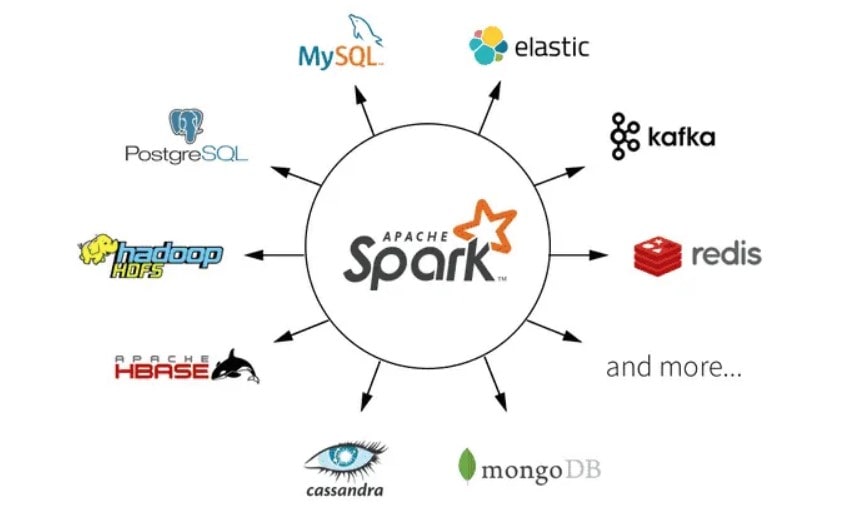

2. Apache Spark

Apache Spark is a powerful open-source big data analytics tool that is changing how data is handled. Known for its speed and ease of use, Spark gives companies a complete tool for big data analysis and machine learning.

Consider a specialist online news outlet. Using Spark, it could track user behavior in real time, spot trending topics, and tailor its content to readers’ tastes.

Apache Spark’s main characteristics are:

- Lightning-Fast Processing: Spark’s in-memory computing speeds up big data processing, which suits applications that need real-time insights.

- Ease of Use: Spark gives developers a flexible platform supporting many programming languages, including Java, Scala, and Python.

- Advanced Analytics: Built-in SQL, streaming, and machine learning modules make Spark an all-in-one platform for big data analytics.

- Fault Tolerance: Spark’s resilient distributed datasets (RDDs) provide fault tolerance by letting the system rebuild lost data.

- Compatibility: Spark connects with Hadoop and its ecosystem, so companies can use their existing Hadoop data and tools.

Among big data analytics tools, Apache Spark is a major player. Using its strengths can help companies stay competitive, reach insights faster, and make sound decisions.
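
The fault tolerance idea behind RDDs is that a dataset remembers how it was derived, so a lost partition can be recomputed instead of restored from a copy. The toy class below illustrates that idea only; `LineageDataset` and its methods are hypothetical names for this sketch and are not part of Spark’s API.

```python
class LineageDataset:
    """Toy stand-in for an RDD: keeps the source partitions and the ordered transformations."""

    def __init__(self, source_partitions, transforms=()):
        self.source_partitions = source_partitions
        self.transforms = tuple(transforms)
        self.cache = {}  # partition index -> computed rows held in memory

    def map(self, fn):
        """Record a transformation; nothing is computed until a partition is requested."""
        return LineageDataset(self.source_partitions, self.transforms + (fn,))

    def compute(self, index):
        """Return one partition, replaying the recorded transformations if it is not cached."""
        if index not in self.cache:
            rows = self.source_partitions[index]
            for fn in self.transforms:
                rows = [fn(row) for row in rows]
            self.cache[index] = rows
        return self.cache[index]

    def lose(self, index):
        """Simulate a failed worker by dropping its in-memory partition."""
        self.cache.pop(index, None)

dataset = LineageDataset([[1, 2], [3, 4]]).map(lambda x: x * 10).map(lambda x: x + 1)
before_failure = dataset.compute(1)
dataset.lose(1)
after_recovery = dataset.compute(1)  # rebuilt from the source data and the recorded steps
```

The recovered partition matches the original because the transformations are deterministic, which is the assumption this style of recovery relies on.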

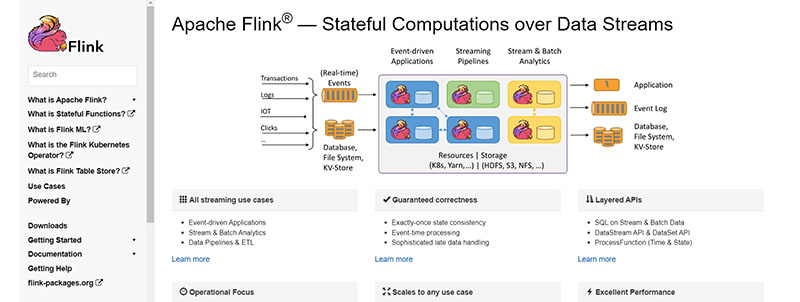

3. Flink

Among big data analytics tools, Flink stands out for its stream processing. An open-source platform that processes data at high speed, Flink is popular with companies doing real-time big data analysis.

Imagine a boutique finance business providing personalized investment guidance. Using Flink, it can analyze live market data, spot trends, and give clients real-time advice.

Here is what distinguishes Flink:

- Real-time Stream Processing: Flink’s strength is its ability to process continuous data streams in real time, which suits companies that need immediate insights.

- Event Time Processing: Unlike many big data technologies, Flink can analyze data according to when events actually happened rather than only when they arrived in the system.

- Fault Tolerance: Flink’s fault tolerance mechanisms help prevent data loss.

- Scalability: Flink suits companies of all sizes because it scales easily to handle large data loads.

- Integration: Flink integrates with well-known big data systems such as Hadoop.

All things considered, Flink is a strong answer for companies that rely on real-time big data analytics. Its distinctive features help businesses stay nimble and responsive in a data-driven world.
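
Event time processing is easier to see with a small example. The sketch below is plain Python rather than Flink code: it assigns each event to a fixed-size (tumbling) window using the timestamp carried by the event, so an event that arrives late still lands in the window where it happened. The trade data and the function name are assumptions for illustration, and the sketch sees all events at once, whereas a real stream processor has to decide how long to wait for late events.

```python
from collections import defaultdict

def tumbling_window_counts(events, window_seconds):
    """Count events per window, keyed by when each event happened, not by arrival order."""
    counts = defaultdict(int)
    for event_time, _payload in events:
        window_start = (event_time // window_seconds) * window_seconds
        counts[window_start] += 1
    return dict(sorted(counts.items()))

# (event_time_in_seconds, payload), listed in arrival order.
# The trade stamped t=4 arrives after the trade stamped t=12.
arrivals = [(1, "trade-a"), (12, "trade-b"), (4, "trade-c"), (15, "trade-d")]

counts = tumbling_window_counts(arrivals, window_seconds=10)
```

Grouping by arrival order instead would have placed the late trade alongside the later ones, which is the distortion event time processing avoids.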

4. Storm

Storm is an open-source big data analytics tool that excels at real-time big data processing. Known for its reliability, Storm provides a stable foundation for companies that need fast data analysis.

Think of a specialist weather forecasting company. It could use Storm to process live atmospheric data and produce precise, real-time forecasts for its audience.

Here’s a closer look at what drives Storm’s popularity as a big data analytics tool:

- Real-Time Processing: Storm excels at processing enormous data streams in real time, offering immediate insights for quick decisions.

- Fault Tolerance: Storm’s fault tolerance means that data processing continues even when a node fails; it is designed so that no data is lost.

- Scalability: Storm gives companies flexibility as they grow, since it can readily scale up to handle vast data volumes.

- Ease of Use: Storm supports several programming languages, which makes it approachable for developers.

- Integration: Storm integrates easily with other systems, letting companies use their existing data resources.

For companies looking for real-time big data analytics, Storm is a potent answer. Its reliable design helps companies draw quick insights from vast data and make sound decisions.

5. ElasticSearch

ElasticSearch is an open-source, scalable search and analytics engine that works well as a general-purpose tool. Known for its speed and efficiency, ElasticSearch helps companies navigate big data lakes quickly.

Consider a specialty e-commerce store. ElasticSearch lets it offer powerful, real-time product search, improving the shopping experience for its customers.

ElasticSearch has several important characteristics:

- Full-Text Search: ElasticSearch excels at full-text search, which helps companies locate specific data in their big data repositories more quickly.

- Scalability: ElasticSearch scales horizontally to handle rising data volumes, so companies can grow without worrying about big data management.

- Real-Time Analytics: ElasticSearch provides real-time analytics, so companies can make quick decisions based on current information.

- Distributed Nature: ElasticSearch runs in a distributed environment, which improves the speed and efficiency of data processing.

- Integration: ElasticSearch works with well-known companion tools such as Logstash and Kibana to form a complete analytics platform.

All things considered, ElasticSearch is a strong, scalable answer for companies that need to sort through large amounts of data. Its search and analytics features help companies make sound, quick decisions based on data analysis.
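
Full-text search engines are commonly built around an inverted index, a map from each term to the documents that contain it. The sketch below shows that data structure in plain Python; it is not ElasticSearch’s API, the product catalog is invented, and it skips the stemming, relevance scoring, and sharding that a production engine adds.

```python
from collections import defaultdict

def build_index(docs):
    """Map every lowercase token to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for token in text.lower().split():
            index[token].add(doc_id)
    return index

def search(index, query):
    """Return the ids of documents that contain every term in the query."""
    terms = query.lower().split()
    if not terms:
        return set()
    result = set(index.get(terms[0], set()))
    for term in terms[1:]:
        result &= index.get(term, set())
    return result

# Hypothetical product catalog for a small online store.
catalog = {
    1: "Vintage round sunglasses",
    2: "Round reading glasses",
    3: "Vintage leather case",
}
index = build_index(catalog)
matches = search(index, "vintage round")
```

A lookup touches only the entries for the query terms rather than scanning every document, which is why this structure stays fast as the catalog grows.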

6. Cassandra

Cassandra is an open-source big data platform valued for its scalability and resilience in handling enormous volumes of structured data. That makes it a good fit for companies whose big data load calls for a dependable, distributed database.

Take the telecom sector, for example. Cassandra can help a telecom operator manage and analyze billions of call records every day.

Cassandra stands out among big data tools for the following:

- Fault Tolerance: Cassandra has no single point of failure, which makes it a strong option for critical data operations.

- Scalability: Cassandra offers linear scalability, letting companies grow their data operations smoothly.

- Flexible Data Storage: Cassandra can manage structured and semi-structured data, offering flexibility for varied data requirements.

- Data Replication: Cassandra provides multi-datacenter replication, keeping data available even if a data center goes down.

- High Performance: Cassandra has fast read and write performance, which is crucial for companies handling vast amounts of data.

Cassandra offers companies with big, complicated data loads a strong and scalable solution. Its capacity to manage several kinds of data while maintaining good performance makes it a reliable partner for companies trying to draw knowledge from their data. Cassandra is one of the most reliable big data management tools available for enterprise-grade systems.
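
The link between replication and the absence of a single point of failure can be sketched with a small hash ring. This is a simplified illustration in plain Python, not Cassandra’s implementation: the `Ring` class, the node names, and the use of MD5 as the hash are assumptions for the example, and real clusters use a different partitioner and virtual nodes.

```python
import hashlib
from bisect import bisect_right

def token(value):
    """Hash a string to a position on the ring (MD5 chosen only for illustration)."""
    return int(hashlib.md5(value.encode()).hexdigest(), 16)

class Ring:
    """Places nodes on a hash ring and stores each key on several consecutive nodes."""

    def __init__(self, nodes, replication_factor=3):
        self.replication_factor = min(replication_factor, len(nodes))
        self.ring = sorted((token(node), node) for node in nodes)
        self.tokens = [position for position, _ in self.ring]

    def replicas(self, key):
        """Walk clockwise from the key's position and take the next N distinct nodes."""
        start = bisect_right(self.tokens, token(key)) % len(self.ring)
        return [
            self.ring[(start + i) % len(self.ring)][1]
            for i in range(self.replication_factor)
        ]

    def readable(self, key, down):
        """A key can still be read if at least one of its replicas is up."""
        return any(node not in down for node in self.replicas(key))

ring = Ring(["node-a", "node-b", "node-c", "node-d"], replication_factor=3)
owners = ring.replicas("call-record-42")
still_available = ring.readable("call-record-42", down={owners[0]})
```

With three copies of every key, losing any one node leaves two replicas to serve reads, which is the behavior the fault tolerance and data replication bullets describe.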

7. MongoDB

MongoDB is a popular big data analytics tool built on a document-oriented database model. This approach to big data storage and retrieval suits companies dealing with unstructured data.

Picture this in the medical field. A hospital has to store and analyze many kinds of patient records. Because MongoDB supports many data types, from text records to medical images, the hospital can manage this data efficiently.

MongoDB stands out with the following characteristics:

- Document-Oriented Storage: Storing information as documents suits storage, retrieval, and processing, and it gives an advantage in managing unstructured data.

- Horizontal Scalability: As your data grows, MongoDB can grow with it by letting you add more machines.

- High Performance: MongoDB offers high-performance data persistence, which suits vast amounts of data.

- Flexible Big Data Model: MongoDB’s schema-less data model adapts well to evolving data structures.

- Indexing: Secondary indexes speed up queries and improve overall performance.

MongoDB helps companies turn unstructured data into useful insights. For companies using big data analytics, its document-oriented approach, scalability, and strong performance are indispensable. MongoDB’s adaptability and indexing features add to its appeal and make it a dependable option among big data analytics systems.

8. Hive

Hive plays a central role in big data analytics by managing big datasets and simplifying complex queries. An Apache project, Hive translates SQL-like queries into MapReduce jobs, which makes big data analytics more accessible. Big data analytics tools like Hive reduce the complexity of querying massive data volumes.

Think of a retail giant with millions of transactions across many locations. It has to examine consumer buying patterns, spot trends, and forecast sales. Hive simplifies data querying and analysis enough to make this enormous job manageable.

Hive excels in these areas:

- Ease of Use: With HiveQL, a SQL-like language, analysts can query data without writing intricate MapReduce jobs.

- Scalability: Hive can efficiently handle big datasets in Hadoop’s HDFS as well as in compatible data stores such as Amazon S3.

- Extensibility: Hive’s user-defined functions (UDFs) let you extend its capabilities and run custom data operations.

- Compatibility: Hive supports ORC, JSON, and CSV, among other data formats.

Hive lets companies explore their enormous data stores and find insights that can drive business growth. Its simplicity, scalability, and flexibility make it a good fit for companies using big data analytics, and its support for several data formats adds to that flexibility.

9. Tableau

Tableau is a leading big data analytics platform that helps companies turn complicated, raw data into understandable, practical insights. The platform also provides a suite of products to meet various data visualization needs.

Consider a hospital trying to improve patient outcomes. Tableau helps it visualize patient data, identify trends, and make informed decisions, improving the overall standard of care.

Tableau distinguishes itself with its:

- Interactivity: Users can drill into data, explore it from several angles, and examine details.

- Accessibility: Tableau Public lets anyone share data visualizations online.

- Integration: Tableau connects to numerous data sources, from Excel to SQL servers.

- Flexibility: From marketing to finance, it serves different business departments.

Tableau helps companies make decisions based on facts. Its interactivity lets people engage with data rather than just look at it. Its accessibility extends the reach of big data analytics and makes it a good fit for companies that prioritize data democratization. By connecting to many data sources, Tableau helps keep data silos from becoming a problem. Its flexibility adds to its value, which is why several business departments turn to it first. In short, Tableau brings order to messy data.

10. PowerBI

PowerBI is a strong big data analytics tool that lets companies turn complex, unprocessed data into clear, useful insights. This suite of business analytics tools is designed for exploring your big data and extracting business insights.

Think of a logistics firm trying to streamline its supply chain. Using PowerBI, it can visualize supply chain data, spot problems, and plan improvements.

PowerBI’s main features are:

- Real-time Dashboards: Create live dashboards and reports using up-to-the-minute data.

- Data Connectivity: Pulls data from hundreds of sources, whether cloud-based or on-premises.

- Collaboration: Lets teams share dashboards and reports across the company.

- Customization: Gives you the freedom to design custom visuals suited to your situation.

PowerBI pushes companies toward data-driven decision-making. Its real-time dashboards keep users current with the latest business figures. Its data connectivity provides access to, and visualization of, data from many sources. Collaboration makes it easy to share findings with team members and promotes a data-driven culture inside the company. Customization lets users fit visuals to their needs, which makes the program quite flexible. In short, PowerBI brings order to noisy data and helps companies act on it.

11. QlikView

QlikView is a prominent big data analytics platform that helps companies turn unprocessed data into insightful analysis. Its associative data indexing engine interprets data from several sources and supports flexible, interactive displays.

Consider a medical practice managing patient records. With QlikView, it could design an interactive dashboard, spot trends, and streamline its patient management system.

Important QlikView features include:

- Associative Data Engine: Helps users uncover hidden trends and patterns.

- Interactive Dashboards: Help create dynamic visuals and reports.

- Data Integration: Creates a single view from data drawn from several sources.

- Secure, Governed Access: Protects data security and integrity.

QlikView stands out for its associative data engine, which automatically finds relationships within data sets and surfaces unexpected insights. Its dynamic dashboards help users navigate and work with data naturally. Data integration simplifies the data landscape so users do not get lost in the maze of big data. Secure, governed access helps keep big data private.

QlikView narrows the gap between big data and decision-making. With it, companies can work through large amounts of data and reach the results they want.

12. RapidMiner

RapidMiner is an end-to-end data science platform and a core tool in big data analytics. It helps companies move from raw big data to useful insights quickly.

Think of an e-commerce business trying to improve customer retention. RapidMiner can sort through customer data, identify trends, and suggest concrete ways to increase customer loyalty.

RapidMiner stands out for features like:

- Automated Big Data Preparation: Simplifies data formatting and cleansing.

- Rich Algorithm Set: Offers a range of machine learning methods.

- Model Validation: Checks the reliability and accuracy of prediction models.

- Easy Deployment: Lets models be put into use smoothly.

RapidMiner starts by automating data preparation, which gives a solid basis for accurate analytics. It then provides a wide range of machine learning techniques to help companies apply predictive analytics. A validation step checks the reliability of these models. Finally, simple deployment lets companies put the models to use quickly, supporting real-time, data-driven decision-making.

RapidMiner lights the road from big data to business value. It is more than a tool; it is a reliable guide that helps companies navigate the big data landscape quickly and with confidence.

13. KNIME

KNIME is an open-source, intuitive big data analytics tool. It gives data professionals a solid platform for turning unprocessed data into insightful analysis.

For example, KNIME lets a healthcare company analyze patient data, spot trends, and base decisions on data to improve patient outcomes.

KNIME’s main characteristics are:

- Simple Interface: Makes drag-and-drop data manipulation possible.

- Scalability: Handles small and large datasets alike.

- Integration: Works with many data sources and formats.

- Flexibility: Offers both no-code and coding options.

With KNIME’s easy-to-use interface, users simply drag and drop components to build workflows. Its scalability lets it handle small to large datasets effectively. Through its integrations, KNIME works with many sources and data types and offers a single view of the data. By providing both coding and no-code options, it appeals to a wide range of users, from beginners to specialists.

In essence, KNIME orchestrates the path from raw data to insight for data professionals. It is one of the most effective open-source big data analytics tools that communities trust.

14. Talend

Talend is a data integration and management tool, and a dependable, fast option for data analytics. By simplifying complex data environments, it helps companies unlock the potential of their data.

Consider an online merchant. Talend lets it combine customer data from several platforms, enabling tailored experiences and higher revenue.

Talend’s key strengths include:

- Data Integration: Brings several data sources into one picture.

- Data Quality: Provides clean, consistent data, which accurate analytics depend on.

- Scalability: Manages both large and small datasets.

- Real-Time Processing: Provides rapid insights to support fast decisions.

Talend’s data integration lets companies combine several data sources into a comprehensive view that improves understanding and decision-making. Its real-time processing supports quick, fact-based decisions.

In essence, Talend is a steady guide on the road through big data: it combines, cleanses, and evaluates data to provide useful insights. That makes it a good companion for companies that want to work with big data simply and with agility. Talend stands out among big data analytics tools for its integration and real-time performance.

15. Google BigQuery

Google BigQuery is a sophisticated data warehouse that provides very fast data analysis and helps you harness big data. This Google Cloud tool lets you quickly scan, analyze, and visualize vast amounts of data, producing insights that could change how your company works.

Imagine you run a global e-commerce platform. Google BigQuery lets you quickly sort through billions of transactions, spot sales trends, and understand consumer behavior.

Its main characteristics include:

- Fully Managed Service: Data infrastructure and resource management are handled for you.

- Real-Time Analytics: Analyzes continuously flowing big data for immediate insights.

- High Scalability: Handles data of any volume quickly.

- AI Integration: Includes built-in machine learning for predictive analytics.

Google BigQuery’s scalability handles data of any volume, so it can grow with your company. Built-in machine learning makes predictive analytics approachable, helping you forecast trends and make sound decisions.

Google BigQuery helps turn vast amounts of data into insightful analysis, paving the way for strategic, data-driven decisions. It is frequently listed among the best big data analytics software options on the market.
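
Usage-based pricing is easier to budget for with a quick calculation. The sketch below uses the $5 per TB processed figure quoted in the comparison table earlier in this article; the decimal definition of a terabyte and the `free_bytes` allowance are assumptions for illustration, so check the provider’s current pricing page before relying on the numbers.

```python
PRICE_PER_TB_USD = 5.0   # per-TB figure quoted in this article's comparison table
BYTES_PER_TB = 10 ** 12  # assumption: decimal terabytes; billing may define the unit differently

def estimate_query_cost(bytes_processed, free_bytes=0):
    """Estimate the cost in USD of scanning `bytes_processed` bytes.

    `free_bytes` is a hypothetical allowance subtracted before billing;
    it defaults to 0 because the free tier size is not given in this article.
    """
    billable = max(0, bytes_processed - free_bytes)
    return billable / BYTES_PER_TB * PRICE_PER_TB_USD

# A query that scans 2.5 TB of transaction data.
cost = estimate_query_cost(2_500_000_000_000)
```

Since the charge follows the amount of data scanned, narrowing a query to the columns and date ranges it needs reduces the bill directly.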

Bottom Line

Big data analytics tools empower companies to turn massive datasets into strategic opportunities. As we close our review of the “Top 15 Big Data Analytics Tools,” it is clear how creatively companies now use big data. These tools have helped companies gain important understanding from large amounts of data.

These tools help you:

- Improve big data handling.

- Create amazing visualizations.

- Provide real-time stats.

Big data analytics tools enable precise, scalable, and secure insight generation for modern enterprises.

The right tool depends on your particular requirements; it could be one from our list or a tool like Plerdy, which specializes in UX and SEO analysis. With these tools at hand, you could substantially transform your company’s operations.

Remember that building these skills takes time, effort, and practice. Consider enrolling in courses, following tutorials, or earning certifications. Then explore the tools offered by vendors like Plerdy, or ask for a demo to get a first-hand impression.

It’s time to use big data analytics tools to unlock your company’s potential. Plerdy is ready to make this journey with you. With analytics tools like Plerdy, your company can achieve both technical accuracy and marketing impact. Try Plerdy now to get started with big data analytics!